Your AI Agents Are Changing State. There's No Audit Trail.


68% of organizations cannot distinguish AI agent actions from human actions after the fact. Here is what production agent governance requires and why logging is not enough.

Two in three organizations cannot tell, after the fact, whether a given action in their production systems was taken by a human or an AI agent. That finding comes from a 2026 survey of over 900 security leaders and practitioners, and it should reframe how every VP of Engineering thinks about AI agent deployment.

Your agents are restarting services. Changing configurations. Rolling back deployments. And when something goes wrong, nobody can reconstruct what the agent did, why it did it, or under what authority.

Key Takeaways

- 68% of organizations cannot distinguish AI agent actions from human actions after the fact.

- 33% lack evidence-quality audit trails entirely. 61% run fragmented infrastructure that cannot produce actionable evidence.

- A new protocol (OpenKedge) formalizes what production agent governance requires: intent proposals, execution contracts, and cryptographic evidence chains.

- The EU AI Act reaches full enforcement August 2, 2026, with 72-hour incident reporting windows. Without audit trails, compliance is impossible.

- The fix is not more logging. It is linking intent to execution to outcome in a verifiable, tamper-evident chain.

The Three Mutations Nobody Tracks

AI agents in production are not just reading data. They are changing state. And the categories of mutation that matter most are exactly the ones where forensic evidence is thinnest.

Service restarts

When an agent decides a service needs to restart, what was the reasoning? What metrics did it evaluate? What alternatives did it consider? Your monitoring shows the restart event. It does not show the decision process. During a post-mortem, the question is never "did the service restart?" It is "should it have?"

Configuration changes

An agent adjusts a rate limit, modifies a feature flag, or updates a connection pool size. These changes are low-visibility and high-impact. They often look correct in isolation but create cascading effects that only surface hours later. Without a record of the agent's intent and the context it evaluated, debugging the cascade starts from zero.

Rollbacks

An agent detects elevated error rates and rolls back the most recent deployment. Was that the right call? Did the error rate correlate with the deploy, or was it caused by something upstream? If the rollback itself caused an incident, who authorized it? The rollback log says "deployment X reverted." It does not say why.

These are not edge cases. They are the daily operational actions that AI agents already perform in production. And for most organizations, the forensic evidence for each of these is a timestamp and an API call in a log file.

The Evidence Gap

The gap between what AI agents do in production and what teams can reconstruct afterward is one of the widest in enterprise technology.

| Metric | Share | Source |
|---|---|---|
| Cannot distinguish AI vs. human actions | 68% | CSA/RSAC 2026 |
| Lack evidence-quality audit trails | 33% | Kiteworks 2026 |
| Fragmented infrastructure, cannot produce evidence | 61% | Kiteworks 2026 |
| Cannot enforce limits on AI agent data access | 63% | Kiteworks 2026 |
| Full visibility into agent-to-agent communication | 24.4% | AGAT Software 2026 |
| Agents running without security oversight or logging | >50% | AGAT Software 2026 |
| Automated controls operating at machine speed | 3% | Teleport 2026 |
| Expect major AI agent incident within 12 months | 97% | CSA/RSAC 2026 |
| Reported confirmed or suspected AI agent incidents | 88% | AGAT Software 2026 |

The last two rows are worth pausing on. 88% report a confirmed or suspected AI agent incident, and 97% expect a major one within the next 12 months. Yet most cannot produce the evidence needed to investigate what went wrong.

What an Evidence Chain Actually Looks Like

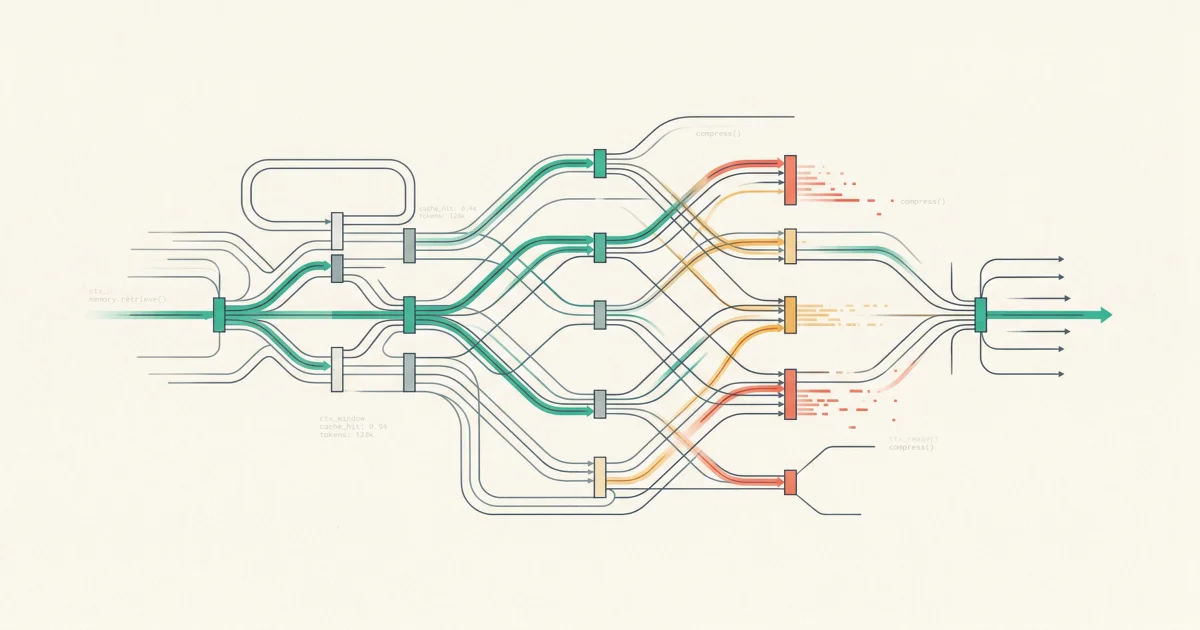

A paper published this week on arXiv introduces OpenKedge, a protocol that formalizes what production agent governance actually requires. The core insight: safety needs to shift from reactive filtering to preventative, execution-bound enforcement.

OpenKedge structures every agent mutation through three stages:

Declarative Intent Proposals

Before an agent executes any state change, it submits a structured intent request. The intent is evaluated against the current system state, temporal signals, and policy constraints. The agent does not call an API directly. It declares what it wants to do and why.
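A minimal sketch of what a declarative intent proposal could look like in practice. This is not OpenKedge's actual schema or API: the `IntentProposal` fields, the `POLICY` table, and the `evaluate_intent` function are hypothetical names chosen for illustration. The point is only that the agent describes the action and its reason, and a separate evaluator decides against current state.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass(frozen=True)
class IntentProposal:
    """What the agent wants to do and why, declared before any API call."""
    agent_id: str
    action: str   # e.g. "restart_service"
    target: str   # e.g. "checkout-api"
    reason: str   # the agent's stated justification
    observed: dict = field(default_factory=dict)  # metrics the agent evaluated


@dataclass(frozen=True)
class PolicyDecision:
    approved: bool
    rule: str     # which rule produced the decision
    decided_at: datetime


# Hypothetical policy table: per-action rules checked against system state.
POLICY = {
    "restart_service": {"max_restarts_per_hour": 2, "blocked_during_deploy": True},
}


def evaluate_intent(intent, system_state, policy=POLICY):
    """Approve or reject an intent; unknown actions are rejected by default."""
    now = datetime.now(timezone.utc)
    rules = policy.get(intent.action)
    if rules is None:
        return PolicyDecision(False, "no_rule_for_action", now)
    if rules["blocked_during_deploy"] and system_state.get("deploy_in_progress"):
        return PolicyDecision(False, "blocked_during_deploy", now)
    if system_state.get("restarts_last_hour", 0) >= rules["max_restarts_per_hour"]:
        return PolicyDecision(False, "max_restarts_per_hour", now)
    return PolicyDecision(True, "restart_allowed", now)
```

Rejecting any action without an explicit rule is a deliberate default in this sketch: an agent cannot mutate state through a path nobody wrote policy for.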

Execution Contracts

Approved intents compile into contracts that bound the permitted actions, resource scope, and time window. The agent receives an ephemeral, task-specific identity for the execution. If the contract expires or the agent exceeds its boundaries, the execution halts.
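The contract idea can be sketched as a small object that bounds actions, resources, and time, and refuses anything outside those bounds. All names here (`ExecutionContract`, `compile_contract`, `ContractViolation`, the 300-second default) are assumptions for illustration, not part of the OpenKedge paper.

```python
import uuid
from dataclasses import dataclass, field
from datetime import datetime, timedelta, timezone


class ContractViolation(Exception):
    """Raised when an agent steps outside its contract; execution should halt."""


@dataclass(frozen=True)
class ExecutionContract:
    allowed_actions: frozenset
    resource_scope: frozenset
    expires_at: datetime
    # Ephemeral, task-specific identity: a fresh ID for every contract.
    identity: str = field(default_factory=lambda: f"task-{uuid.uuid4()}")

    def authorize(self, action, resource, now=None):
        """Raise ContractViolation unless the call is inside the contract."""
        now = now or datetime.now(timezone.utc)
        if now >= self.expires_at:
            raise ContractViolation("contract expired")
        if action not in self.allowed_actions:
            raise ContractViolation(f"action not permitted: {action}")
        if resource not in self.resource_scope:
            raise ContractViolation(f"resource out of scope: {resource}")


def compile_contract(action, target, ttl_seconds=300, now=None):
    """Turn one approved intent into a contract for exactly that action and target."""
    now = now or datetime.now(timezone.utc)
    return ExecutionContract(
        allowed_actions=frozenset({action}),
        resource_scope=frozenset({target}),
        expires_at=now + timedelta(seconds=ttl_seconds),
    )
```

An executor would call `authorize` before every API call made under the contract's identity, so an expired or over-reaching agent fails closed instead of continuing with standing credentials.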

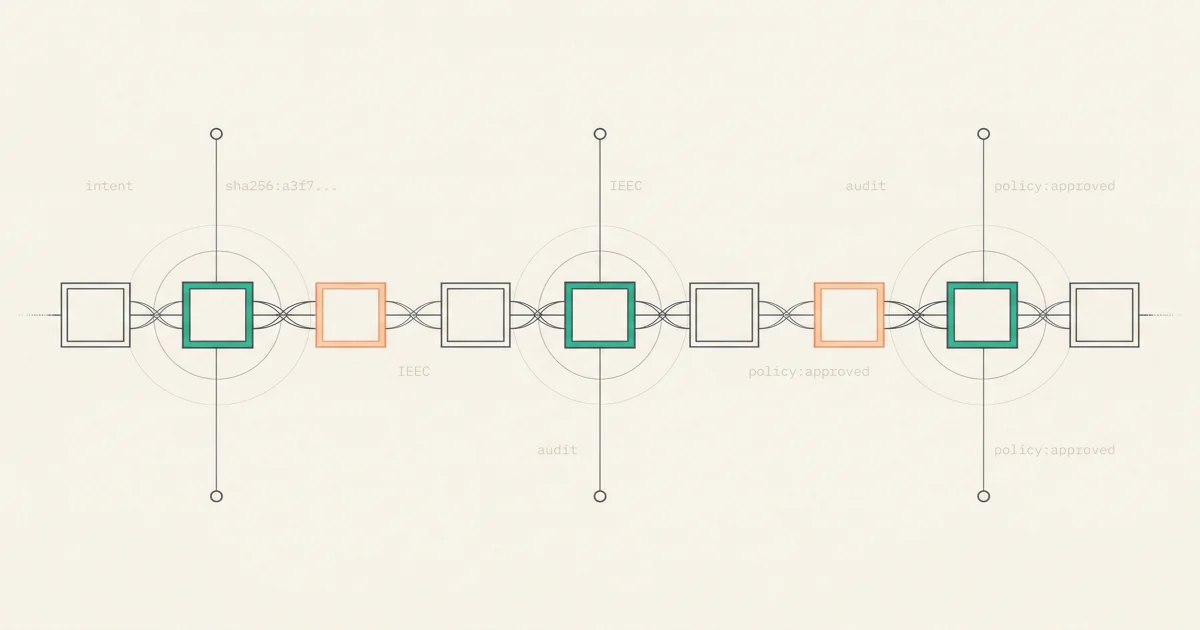

Intent-to-Execution Evidence Chain

Every mutation is cryptographically linked across five elements: the intent proposal, the contextual state evaluation, the policy decision, the execution boundaries, and the actual outcome. This creates what the authors call "a unified lineage" that enables "deterministic auditability and reasoning about system behavior."

This is not theoretical. Asqav, an open-source SDK released this month, implements a similar model. Every agent action is signed with a quantum-safe ML-DSA-65 signature and hash-chained to the previous action. If anyone modifies an entry or removes one from the sequence, the verification chain breaks immediately. The SDK supports policy enforcement that evaluates action patterns before execution, and multi-party signing that requires minimum approvals for critical operations.
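To make the hash-chaining idea concrete, here is a minimal sketch using only the Python standard library. It is not Asqav's API, and it deliberately swaps the signature scheme: HMAC-SHA256 with a hypothetical demo key stands in for ML-DSA-65, which is not available in the standard library. The function names and entry layout are assumptions.

```python
import hashlib
import hmac
import json

GENESIS = "0" * 64
# Hypothetical demo key. HMAC-SHA256 stands in for a real signature scheme.
SIGNING_KEY = b"demo-key-not-for-production"


def _digest(prev_hash, payload):
    """Hash the previous entry's hash together with a canonical JSON payload."""
    body = json.dumps(payload, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256((prev_hash + body).encode("utf-8")).hexdigest()


def _tag(entry_hash):
    return hmac.new(SIGNING_KEY, entry_hash.encode("utf-8"), hashlib.sha256).hexdigest()


def append_entry(chain, payload):
    """Append a payload, linking it to the hash of the entry before it."""
    prev_hash = chain[-1]["hash"] if chain else GENESIS
    entry_hash = _digest(prev_hash, payload)
    entry = {"payload": payload, "prev_hash": prev_hash,
             "hash": entry_hash, "tag": _tag(entry_hash)}
    chain.append(entry)
    return entry


def verify_chain(chain):
    """Return the index of the first broken entry, or None if the chain is intact."""
    prev_hash = GENESIS
    for index, entry in enumerate(chain):
        expected_hash = _digest(prev_hash, entry["payload"])
        if entry["prev_hash"] != prev_hash or entry["hash"] != expected_hash:
            return index
        if not hmac.compare_digest(entry["tag"], _tag(expected_hash)):
            return index
        prev_hash = entry["hash"]
    return None
```

Editing a payload or deleting an entry from the middle changes the hash the next entry expects, so `verify_chain` reports the position of the break. One limitation of this sketch: dropping entries from the tail is not detected unless the latest hash is also stored somewhere outside the chain.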

These are the building blocks of production agent governance. Intent. Boundaries. Evidence. Verification.

Why Logging Is Not Auditing

Most teams believe their existing observability stack covers AI agent actions. It does not.

Logs capture what happened, not why

Your structured logs show that an agent restarted a service at 03:14 UTC. They do not show that the agent evaluated three metrics, considered two alternative actions, and chose the restart because of a specific policy threshold. When the post-mortem asks "was this the right call?", the log entry has no answer.
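A sketch of the record that would answer the post-mortem question, as opposed to the log line that cannot. The field names, metric values, and the `build_decision_record` helper are all hypothetical; what matters is that the metrics, the rejected alternatives, and the policy threshold are captured at decision time.

```python
import json
from datetime import datetime, timezone


def build_decision_record(agent_id, action, target, metrics,
                          alternatives, policy_rule, rationale):
    """Return a JSON-serializable record of why an action was chosen."""
    return {
        "recorded_at": datetime.now(timezone.utc).isoformat(),
        "agent_id": agent_id,
        "action": action,
        "target": target,
        "metrics_evaluated": metrics,
        "alternatives_considered": alternatives,
        "policy_rule": policy_rule,
        "rationale": rationale,
    }


# Illustrative values only: three metrics, two rejected alternatives.
record = build_decision_record(
    agent_id="remediation-agent-1",
    action="restart_service",
    target="checkout-api",
    metrics={"p99_latency_ms": 4200, "error_rate": 0.07, "heap_used_pct": 96},
    alternatives=[
        {"action": "scale_out", "rejected_because": "heap exhaustion is per-instance"},
        {"action": "rollback", "rejected_because": "no deploy in the last 24h"},
    ],
    policy_rule="restart_if_heap_used_pct_over_95",
    rationale="Heap usage crossed the restart threshold while latency degraded.",
)
print(json.dumps(record, indent=2))
```

Writing this record before the API call, rather than reconstructing it afterward, is what keeps the reasoning from being lost when the incident itself disrupts the agent.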

Permissions are not governance

74% of AI agents are provisioned with more permissions than their tasks require, the same over-privilege pattern that drives 4.5x higher incident rates. But even correctly scoped permissions only answer "could this agent do this?" They do not answer "should it have?" Governance requires linking the action to the reasoning and the policy that authorized it. Permissions are necessary but not sufficient.

Compliance certifications are lagging indicators

SOC 2 certifications and ISO 27001 badges tell you what controls existed at audit time. They do not tell you whether those controls caught an agent making unauthorized changes last Tuesday. As the AI supply chain trust gap has shown, certifications are point-in-time attestations, not runtime guarantees.

The Regulatory Clock

The EU AI Act reaches full enforcement on August 2, 2026. For high-risk AI systems, the requirements include conformity assessments, human oversight mechanisms, and technical documentation ready for inspection.

The 72-hour incident reporting window is where audit trails become existential. When a regulator asks "what did this agent do during the incident?", you need to produce a verifiable timeline within three days. Not a log file. A forensic chain that links the agent's intent to its context to its actions to their outcomes.

For organizations deploying AI agents that modify production infrastructure, the regulatory question is straightforward: can you reconstruct what your agents did, why, and under what authority? If the answer is no, August 2 is your deadline.

What to Do This Week

- Inventory your agent mutations. List every AI agent in production that can change state: restart services, modify configs, deploy code, roll back changes. For each one, document what forensic evidence exists for those actions today.

- Test your reconstruction capability. Pick an agent action from last week. Try to answer: what did it do, why, what alternatives did it consider, and what policy authorized it? If you cannot answer all four, your audit trail has gaps.

- Separate intent from execution. Even before adopting a formal protocol, log the agent's reasoning separately from the API call it makes. Capture the metrics evaluated, the decision criteria, and the alternatives considered. This is the minimum viable evidence chain.

- Scope your blast radius. For each state-changing agent, answer: if this agent malfunctions, what is the worst it can do? Match the governance investment to the blast radius. An agent that reads logs needs less evidence infrastructure than one that restarts services with broad permissions.

- Set a compliance timeline. If you operate in the EU or serve EU customers, map your AI agent actions against the EU AI Act's high-risk categories. Identify which agents need conformity assessments and start the documentation now. August 2 is less than four months away.
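The reconstruction test in the list above can be run mechanically against whatever records you already keep. The sketch below assumes hypothetical field names (`rationale`, `metrics_evaluated`, `alternatives_considered`, `policy_rule`); map them to your own schema before using it.

```python
# The four questions from the reconstruction test, mapped to the record
# fields (hypothetical names) that would be needed to answer each one.
REQUIRED_EVIDENCE = {
    "what did it do": ("action", "target"),
    "why": ("rationale", "metrics_evaluated"),
    "what alternatives did it consider": ("alternatives_considered",),
    "what policy authorized it": ("policy_rule",),
}


def reconstruction_gaps(record):
    """Return the questions a record cannot answer (missing or empty fields)."""
    return [
        question
        for question, fields in REQUIRED_EVIDENCE.items()
        if any(not record.get(name) for name in fields)
    ]


# A typical log entry: a timestamp and an API call, nothing else.
typical_log_entry = {
    "action": "restart_service",
    "target": "checkout-api",
    "timestamp": "03:14 UTC",
}
print(reconstruction_gaps(typical_log_entry))
# ['why', 'what alternatives did it consider', 'what policy authorized it']
```

Because empty fields count as gaps, an agent that truly considered no alternatives should record that explicitly (for example, with a single entry explaining why) instead of leaving the list empty.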

The Real Lesson

The organizations that will navigate AI agent governance successfully are not the ones deploying the fewest agents. They are the ones that can prove what their agents did and why.

Logging tells you what happened. An evidence chain tells you whether it should have. That distinction is the difference between an incident report and a compliance violation.

The tools and protocols for building production-grade evidence chains exist today. OpenKedge formalizes the architecture. Asqav provides an open-source implementation. The question is no longer technical. It is whether your team treats agent auditability as infrastructure or as an afterthought.

Every agent that changes production state should leave a forensic trail that a staff SRE can follow during a post-mortem, and that a regulator can verify during an audit. If your agents cannot do that today, the gap is not in your monitoring. It is in your governance architecture.

Frequently Asked Questions

What is an AI agent audit trail?

An AI agent audit trail is a forensic record that links every agent action to the intent that triggered it, the context evaluated, the policy decision made, the execution boundaries applied, and the actual outcome. It answers five questions for any agent action: what happened, why, under what authority, within what constraints, and with what result.

Why can't standard logging replace an AI agent audit trail?

Standard logging captures what happened but not why. When an AI agent restarts a service, your logs show the restart. They do not show the agent's reasoning, the alternative actions considered, or the policy that authorized the action. During an incident review, this gap makes it impossible to determine whether the agent acted correctly.

How does the EU AI Act affect AI agent audit requirements?

The EU AI Act reaches full enforcement on August 2, 2026. High-risk AI systems must maintain technical documentation, human oversight mechanisms, and conformity assessments. The 72-hour incident reporting window means you need to reconstruct exactly what an agent did and why within three days. Without an evidence chain, meeting this timeline is nearly impossible.

What is an Intent-to-Execution Evidence Chain?

An Intent-to-Execution Evidence Chain (IEEC), introduced in the OpenKedge protocol, cryptographically links five elements of every agent action: the intent proposal, the contextual state evaluation, the policy decision, the execution boundaries, and the actual outcome. This creates a verifiable, tamper-evident record that enables deterministic auditability.

What percentage of organizations have adequate AI agent audit trails?

33% of organizations lack evidence-quality audit trails entirely, according to Kiteworks' 2026 research. 61% run fragmented data exchange infrastructure that cannot produce actionable evidence. Only 24.4% have full visibility into agent-to-agent communication. The gap between AI agent deployment and auditability is one of the widest in enterprise technology.

Evidence Chains for Every Investigation

TierZero Production Agents produce a full forensic trail for every action. Every investigation shows cited evidence, every remediation requires approval, and the complete intent-to-execution chain is auditable. Deploys on-prem for regulated industries.

Co-Founder & CEO at TierZero AI

Previously Director of Engineering at Niantic. CTO of Mayhem.gg (acq. Niantic). Owned social infrastructure for 50M+ daily players. Tech Lead for Meta Business Manager.
