The Invisible Tax Your AI Agents Pay on Every Call

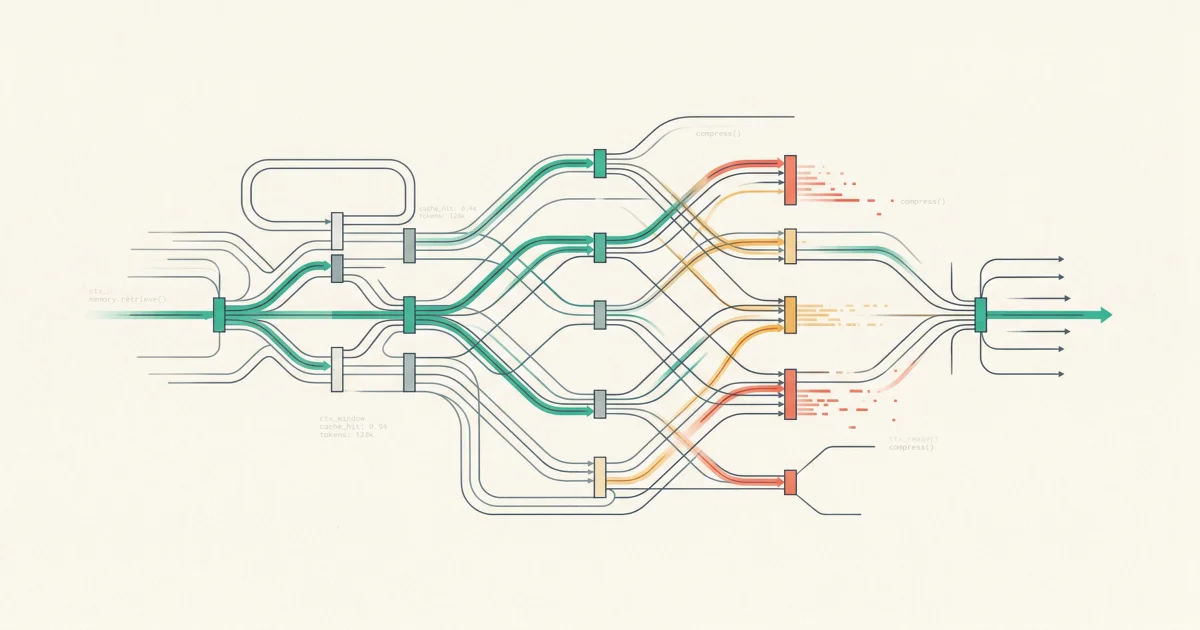


Every round trip in a stateless agent loop re-sends the full conversation. Stateful continuation cuts bandwidth by 82% and execution time by 29%. Here is the optimization stack most teams are missing.

A 10-turn agent conversation does not cost 10x a single turn. It costs closer to 55x.

Every round trip in a stateless HTTP call re-sends the full conversation history: system instructions, tool definitions, every prior model output, every tool call result. For a chatbot, that overhead is annoying. For an agent running 50 tool calls per task, it is the single largest hidden cost in your AI infrastructure.

New benchmarks published this week quantify what most teams have not measured: the transport layer between your agent and the model API can carry about 5.5x the data it needs to, which means roughly 82% of the bytes sent are context the server already has. And in most production agent systems, nobody is looking at it.


The Compounding Problem

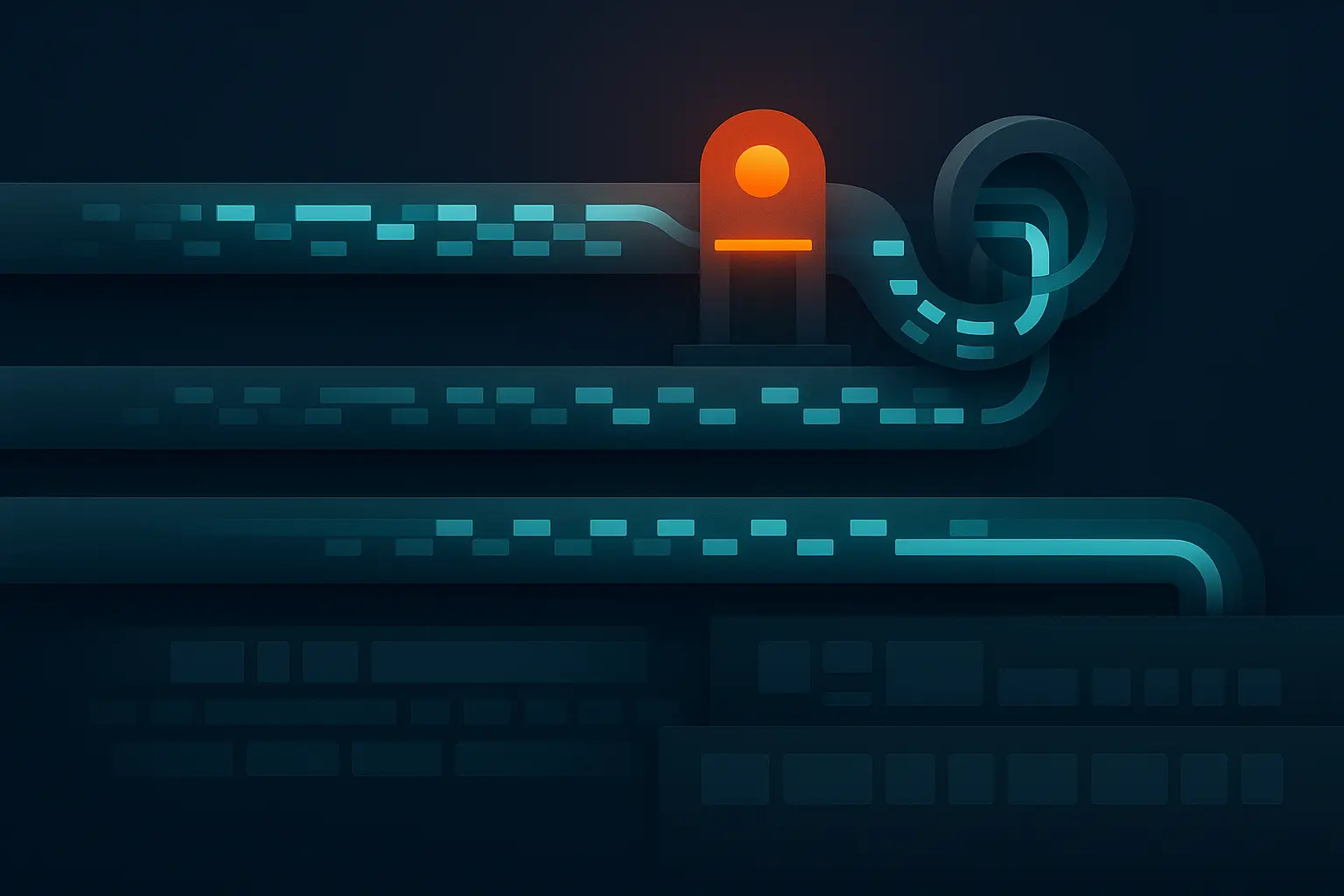

Standard LLM chat is stateless by design. Each API call includes everything the model needs to generate the next response. For a single-turn question, this is fine. The overhead is a few kilobytes.

Agents break this model. A production agent investigating an incident might make 50 tool calls: pulling logs from Datadog, reading recent deployments from GitHub, checking alert history in PagerDuty, querying the knowledge base. Each of those calls re-sends the full context.

The InfoQ analysis breaks down what gets retransmitted on every HTTP round trip:

- System instructions and tool definitions (roughly 2 KB)

- The original user prompt

- Every prior model output

- Every tool call result

By turn 10, you are sending turns 1 through 9 again. By turn 50, you are sending turns 1 through 49. The cost curve is not linear. It is quadratic.
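The 55x figure follows from a simple model. The sketch below assumes, as a simplification, that every turn adds the same amount of content, and it ignores the fixed system prompt and tool definitions (which add a further per-request constant). Real turns vary in size, so treat this as an illustration of the curve's shape rather than a cost estimate.

```python
def total_units_sent(turns: int, stateful: bool) -> int:
    """Total turn-sized units of content sent over a whole conversation.

    Simplification: every turn adds one equally sized unit of content, and the
    fixed system prompt and tool definitions are ignored.
    """
    if stateful:
        # Each request carries only the newest turn.
        return turns
    # Request k re-sends turns 1..k, so the total is 1 + 2 + ... + n = n(n + 1) / 2.
    return turns * (turns + 1) // 2


for n in (10, 20, 50):
    print(n, total_units_sent(n, stateful=False), total_units_sent(n, stateful=True))
```

At 10 turns this gives 55 units sent statelessly versus 10 with continuation; at 50 turns it is 1,275 versus 50.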

A 20-turn conversation consumes 5,000 to 10,000 tokens when only 500 to 1,000 tokens of recent context would suffice. That is 80-90% waste on context that the model has already processed.

How Big Is the Tax?

The InfoQ benchmarks tested a multi-turn agentic coding task across transport protocols. The results are not subtle.

| Metric | HTTP (stateless) | WebSocket (stateful) | Improvement |
|---|---|---|---|
| End-to-end execution time (GPT-5.4) | Baseline | 29% faster | -29% time |
| Client-sent data (GPT-5.4) | 176 KB per task | 32 KB per task | -82% bandwidth |
| Time to first token (GPT-5.4) | Baseline | 14% lower | -14% TTFT |
| Client-sent data (GPT-4o-mini) | Baseline | 86% less | -86% bandwidth |
| Total time (GPT-4o-mini) | Baseline | 15% faster | -15% time |

At an estimated scale of 1 million concurrent agentic sessions, the difference is 176 GB versus 32 GB of ingress traffic per 40-second task window. That is 144 GB of data that exists only because the transport protocol requires re-sending context the server already has.
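The headline numbers can be checked directly from the GPT-5.4 row of the table. This is plain arithmetic on the published figures: the 82% is (176 − 32) / 176, and the 1-million-session figure simply multiplies the per-task size, using decimal units.

```python
# Figures from the InfoQ benchmark table (GPT-5.4) and the article's scale estimate.
HTTP_KB_PER_TASK = 176
WEBSOCKET_KB_PER_TASK = 32
CONCURRENT_SESSIONS = 1_000_000


def reduction_pct(before: float, after: float) -> float:
    """Percentage reduction going from `before` to `after`."""
    return (before - after) / before * 100


def ingress_gb(kb_per_task: float, sessions: int) -> float:
    """Aggregate client-sent data in decimal GB (1 GB = 1,000,000 KB)."""
    return kb_per_task * sessions / 1_000_000


http_gb = ingress_gb(HTTP_KB_PER_TASK, CONCURRENT_SESSIONS)
ws_gb = ingress_gb(WEBSOCKET_KB_PER_TASK, CONCURRENT_SESSIONS)
print(f"bandwidth reduction: {reduction_pct(HTTP_KB_PER_TASK, WEBSOCKET_KB_PER_TASK):.1f}%")  # 81.8%
# prints: ingress: 176 GB vs 32 GB, redundant: 144 GB
print(f"ingress: {http_gb:.0f} GB vs {ws_gb:.0f} GB, redundant: {http_gb - ws_gb:.0f} GB")
```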

The speed improvement comes from server-side state management: the WebSocket server stores the most recent response in connection-local volatile memory, enabling near-instant continuation without re-tokenizing the full conversation.

Three Layers of the Tax

Transport overhead is the most visible layer, but it is not the only one. Production agents pay a compounding tax at three levels.

Layer 1: Transport

Every HTTP round trip re-serializes and re-transmits the full conversation. In the InfoQ benchmark, that meant 176 KB of client-sent data per task over HTTP versus 32 KB over a stateful WebSocket. This raw bandwidth cost scales linearly with concurrent sessions and quadratically with conversation depth.

Layer 2: Context Tokenization

Re-sending context is only half the problem. Without caching, the model must re-tokenize and re-process the full conversation on every call, re-computing attention over the entire history. Prompt caching avoids most of this work for a stable prompt prefix, reducing time-to-first-token by up to roughly 80% and the cost of cached input tokens by up to 90%. But most production agent systems are not using it, and the model provider charges you for input tokens whether or not they carry new information.

Layer 3: Tooling Metadata

Agent frameworks define tools with JSON schemas, descriptions, and parameter specifications. MCP tool metadata can consume 40-50% of the available context window. A detailed 500-token system prompt multiplied across 1,000 API calls per day adds up to 500,000 tokens just for instructions. Dynamic tool loading, where agents only load relevant tool definitions per turn, can reduce this overhead by 85%.

The compounding effect across all three layers is what makes agent costs unpredictable. Agentic systems consume 5-30x more tokens per task than a standard chat interaction. Complex multi-agent systems can reach 200,000 to over 1,000,000 tokens per task. An unconstrained agent solving a software engineering issue can cost $5 to $8 per task, and multi-step research agents can burn through $5 to $15 in minutes.

What Stateful Continuation Actually Requires

Switching from stateless HTTP to stateful continuation is not just a protocol change. It is an infrastructure decision with real tradeoffs.

Stateful continuation works by keeping the conversation state on the server. Instead of re-sending everything, the client sends a `previous_response_id` referencing the cached state (roughly 60 bytes) plus only the new tool outputs (1-3 KB). The server re-hydrates the conversation from its cache and continues.
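To see where the savings come from, here is a minimal simulation of client-sent bytes under both patterns. The payload shapes are loosely modeled on the description above (a `previous_response_id` plus new items); they are illustrative, not an exact wire format for any provider. The ~2 KB prompt and tool results are hypothetical stand-ins, and model outputs are left out of the history for brevity, which understates the stateless total.

```python
import json

# Stand-in for roughly 2 KB of system instructions and tool definitions.
SYSTEM_PROMPT = "You are an incident-response agent. " * 55


def tool_result(i: int) -> dict:
    """A hypothetical tool output item of roughly 2 KB."""
    return {"type": "function_call_output", "call_id": f"call_{i}", "output": "log line " * 220}


def size_bytes(payload: dict) -> int:
    return len(json.dumps(payload).encode("utf-8"))


def client_bytes(turns: int) -> tuple[int, int]:
    """Total client-sent bytes over `turns` requests: (stateless, stateful)."""
    history = [
        {"role": "system", "content": SYSTEM_PROMPT},
        {"role": "user", "content": "Why did checkout latency spike?"},
    ]
    stateless = stateful = 0
    for i in range(turns):
        item = tool_result(i)
        history.append(item)
        full = {"model": "example-model", "input": history}
        # Stateless: the full history travels on every request.
        stateless += size_bytes(full)
        if i == 0:
            # The first stateful request has no prior state to reference.
            stateful += size_bytes(full)
        else:
            # Continuation: a short response id plus only the new item.
            stateful += size_bytes({"model": "example-model",
                                    "previous_response_id": f"resp_{i - 1:04d}",
                                    "input": [item]})
    return stateless, stateful


sent_http, sent_ws = client_bytes(10)
print(sent_http, sent_ws, f"{1 - sent_ws / sent_http:.0%} less")
```

The stateless total grows with the square of the turn count, while the continuation total grows linearly after the first request.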

This requires:

- Server-side session management. The model API server must maintain per-connection state in volatile memory. This conflicts with the stateless load balancing and horizontal scaling patterns that most cloud infrastructure relies on.

- Reconnect handling. WebSocket connections drop. The system needs a graceful fallback when the cached state is lost, which typically means re-sending the full context as a recovery path.

- Provider support. As of April 2026, the landscape is uneven:

| Provider | Stateful support | Notes |
|---|---|---|
| OpenAI Responses API | Yes | Since February 2026 |
| Google Gemini | Partial | Audio/video streams only |
| Anthropic Claude | No | Uses Server-Sent Events |
| Ollama / vLLM | No | No WebSocket support |
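The reconnect requirement can be sketched as a client that keeps a full local copy of the conversation and falls back to a full re-send when the server reports that its cached state is gone. Everything here is hypothetical: `FakeStatefulServer` is a toy in-memory stand-in for a stateful model API, and `SessionExpired` is an assumed error signal; real providers surface lost state differently.

```python
class SessionExpired(Exception):
    """Raised when the server no longer holds the referenced state."""


class FakeStatefulServer:
    """Toy stand-in for a model API that keeps conversation state in memory."""

    def __init__(self):
        self.states = {}   # response id -> full conversation (volatile)
        self.log = []      # every payload received, for inspection
        self.counter = 0

    def __call__(self, payload: dict) -> dict:
        self.log.append(payload)
        prev = payload.get("previous_response_id")
        if prev is not None:
            if prev not in self.states:
                raise SessionExpired(prev)
            conversation = self.states[prev] + payload["input"]
        else:
            conversation = list(payload["input"])
        self.counter += 1
        output = [{"role": "assistant", "content": f"reply {self.counter}"}]
        response_id = f"resp_{self.counter}"
        self.states[response_id] = conversation + output
        return {"id": response_id, "output": output}

    def drop_connection(self):
        self.states.clear()  # connection-local, volatile state is lost


class ContinuationClient:
    def __init__(self, transport):
        self.transport = transport
        self.history = []            # full local copy, used only for recovery
        self.last_response_id = None

    def send(self, new_items: list) -> dict:
        self.history.extend(new_items)
        response = None
        if self.last_response_id is not None:
            try:
                # Fast path: reference server-held state, send only new items.
                response = self.transport({"previous_response_id": self.last_response_id,
                                           "input": new_items})
            except SessionExpired:
                response = None  # fall through to the full re-send
        if response is None:
            # Recovery path: re-send the whole conversation.
            response = self.transport({"input": list(self.history)})
        self.last_response_id = response["id"]
        self.history.extend(response["output"])
        return response


server = FakeStatefulServer()
client = ContinuationClient(server)
client.send([{"role": "user", "content": "Why did checkout latency spike?"}])
client.send([{"role": "tool", "content": "deploy 8f3a at 14:02"}])
server.drop_connection()
client.send([{"role": "tool", "content": "p99 alert at 14:05"}])
```

The design trade-off is that the client still stores the full history, so continuation saves bandwidth and server work but not client memory.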

The MCP specification is evolving toward stateless-friendly patterns specifically because stateful sessions create friction at scale. Managed services and load balancers fight with connection-pinned state. The 2026 roadmap includes changes to let servers scale horizontally without holding state, with specification updates tentatively slated for June 2026.

The Optimization Stack You Can Use Today

You do not need to wait for protocol changes. Most of the transport tax is addressable with techniques available right now.

Prompt caching is the highest-leverage optimization. Depending on the provider, cached input tokens cost as little as roughly 10% of the normal input price. For a coding assistant analyzing a 50,000-token repository, caching means the repository context is processed once and reused across all follow-up queries, and time-to-first-token can drop by roughly 80%.
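A rough cost model shows why caching dominates for a large, stable prefix. This sketch assumes cached tokens are billed at a fixed fraction of the normal input price, that the first call pays full price to populate the cache, and it ignores cache-write surcharges and expiry, which vary by provider. The $3 per million tokens price is illustrative only.

```python
from typing import Optional


def input_cost_usd(prefix_tokens: int, fresh_tokens: int, calls: int,
                   price_per_mtok: float, cached_price_ratio: Optional[float]) -> float:
    """Input-token cost of `calls` requests that share a static prefix.

    cached_price_ratio: price of a cached token relative to a normal input token
    (e.g. 0.10), or None when caching is off. The first call pays full price to
    populate the cache; cache-write surcharges and cache expiry are ignored.
    """
    per_token = price_per_mtok / 1_000_000
    if cached_price_ratio is None:
        return calls * (prefix_tokens + fresh_tokens) * per_token
    first_call = (prefix_tokens + fresh_tokens) * per_token
    later_calls = (calls - 1) * (prefix_tokens * cached_price_ratio + fresh_tokens) * per_token
    return first_call + later_calls


# Hypothetical workload: a 50,000-token repository prefix, 500 new tokens per
# query, 20 queries, at an illustrative $3 per million input tokens.
uncached = input_cost_usd(50_000, 500, 20, 3.0, None)
cached = input_cost_usd(50_000, 500, 20, 3.0, 0.10)
print(f"uncached ${uncached:.2f}, cached ${cached:.3f}")
```

Under these assumptions the input cost falls from about $3.03 to about $0.47, roughly 85% less. The saving stays below 90% because the first call and the fresh tokens on every call are billed at full price.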

Conversation summarization prevents quadratic context growth. Instead of appending every turn to the conversation, periodically summarize older turns and replace them with a compressed representation. This keeps the context window focused on recent, relevant information. Dynamic turn limits can cut costs by 24% while maintaining solve rates.
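A minimal sketch of threshold-triggered summarization follows. It assumes the first message is the system prompt and keeps it, plus a few recent messages, verbatim. `compact_history` is a hypothetical helper, and the whitespace token counter and placeholder summarizer stand in for a real tokenizer and an LLM summarization call. The 65% default sits inside the 60-70% utilization band this article recommends.

```python
from typing import Callable


def compact_history(messages: list[dict], count_tokens: Callable[[str], int],
                    context_limit: int, summarize: Callable[[list[dict]], str],
                    threshold: float = 0.65, keep_recent: int = 4) -> list[dict]:
    """Summarize older turns once context utilization crosses `threshold`.

    Assumes messages[0] is the system prompt, which is always kept verbatim,
    along with the `keep_recent` most recent messages.
    """
    used = sum(count_tokens(m["content"]) for m in messages)
    if used < threshold * context_limit or len(messages) <= keep_recent + 1:
        return messages
    cut = len(messages) - keep_recent
    system, older, recent = messages[0], messages[1:cut], messages[cut:]
    summary = {"role": "user", "content": "Summary of earlier turns: " + summarize(older)}
    return [system, summary, *recent]


# Toy stand-ins: whitespace token counting and a placeholder summarizer.
def word_count(text: str) -> int:
    return len(text.split())


def placeholder_summary(msgs: list[dict]) -> str:
    return f"{len(msgs)} earlier messages condensed."


history = [{"role": "system", "content": "You are an agent."}]
history += [{"role": "tool", "content": "word " * 100} for _ in range(10)]
compacted = compact_history(history, word_count, context_limit=1_000,
                            summarize=placeholder_summary)
print(len(history), "->", len(compacted))
```

Because each request is capped near the threshold, total tokens sent grow roughly linearly with the number of turns instead of quadratically.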

Semantic caching catches repeated or similar queries before they reach the model. In high-repetition workloads, semantic caching delivers up to 73% cost reduction by returning cached responses in milliseconds instead of making fresh API calls.
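The core of a semantic cache is a similarity lookup in front of the model call. In this sketch a bag-of-words vector stands in for a real embedding model, and a linear scan stands in for a vector index. Both are simplifications, and the similarity threshold is an assumption you would tune: set it too low and the cache returns answers to different questions.

```python
import math
from collections import Counter
from typing import Optional


def embed(text: str) -> Counter:
    # Toy bag-of-words vector; a real system would call an embedding model.
    return Counter(text.lower().split())


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[t] * b[t] for t in a)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


class SemanticCache:
    def __init__(self, threshold: float = 0.9):
        self.threshold = threshold
        self.entries = []  # list of (vector, cached response)

    def get(self, query: str) -> Optional[str]:
        """Return a cached response for a similar enough query, else None."""
        q = embed(query)
        best = max(self.entries, key=lambda e: cosine(q, e[0]), default=None)
        if best is not None and cosine(q, best[0]) >= self.threshold:
            return best[1]
        return None  # cache miss: call the model, then put() the result

    def put(self, query: str, response: str) -> None:
        self.entries.append((embed(query), response))


cache = SemanticCache()
cache.put("what is the p99 latency of checkout", "412 ms")
print(cache.get("What is the p99 latency of checkout"))
print(cache.get("restart the payments service"))
```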

Dynamic tool loading reduces the tooling metadata tax. Instead of sending all tool definitions on every call, load only the tools relevant to the current step. RAG-based tool selection improves accuracy by approximately 3x while dramatically shrinking the context overhead.
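Dynamic tool loading amounts to a retrieval step that runs before each model call. The sketch below ranks tools by word overlap between the current step and each tool's description. That overlap score is a deliberately simple stand-in for the embedding-based (RAG) retrieval mentioned above, and the tool registry is hypothetical.

```python
import json

# Hypothetical tool registry; production schemas are usually far larger.
TOOLS = {
    "query_logs": {
        "description": "search application logs by service and time range",
        "parameters": {"type": "object", "properties": {
            "service": {"type": "string"}, "since": {"type": "string"}}},
    },
    "list_deployments": {
        "description": "list recent deployments of a repository",
        "parameters": {"type": "object", "properties": {"repo": {"type": "string"}}},
    },
    "get_alert_history": {
        "description": "get alert history of a pagerduty service",
        "parameters": {"type": "object", "properties": {"service": {"type": "string"}}},
    },
    "find_runbooks": {
        "description": "find runbooks in internal documentation",
        "parameters": {"type": "object", "properties": {"topic": {"type": "string"}}},
    },
}


def select_tools(step: str, tools: dict, k: int = 2) -> dict:
    """Keep the k tools whose descriptions share the most words with the step.

    Word overlap is a stand-in for embedding-based (RAG) retrieval.
    """
    step_words = set(step.lower().split())

    def overlap(item) -> int:
        _, spec = item
        return len(step_words & set(spec["description"].split()))

    ranked = sorted(tools.items(), key=overlap, reverse=True)
    return dict(ranked[:k])


selected = select_tools("search the logs for checkout errors", TOOLS, k=1)
print(list(selected), len(json.dumps(selected)), "vs", len(json.dumps(TOOLS)), "bytes")
```

Only the selected schemas go into the request, so the per-call metadata cost scales with `k` rather than with the size of the whole tool catalog.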

What to Do This Week

- Measure your actual token spend per agent task. Not the API bill: the token count. Break it down by input versus output, cached versus uncached, and tool definitions versus conversation content. If you cannot do this, you are optimizing blind.

- Enable prompt caching. If your provider supports it and you are not using it, you are leaving up to 90% savings on cached input tokens on the table. This is the single highest-ROI change for most agent systems.

- Audit your tool definitions. Count how many tokens your tool schemas consume per call. If it is more than 20% of your context window, implement dynamic tool loading or trim the schema definitions.

- Implement conversation summarization. Set a threshold (context utilization at 60-70%) where older turns get summarized and replaced. Do not wait until you hit the context window limit. Context rot degrades accuracy well before overflow.

- Benchmark your transport layer. Run the same agent task over HTTP and, if your provider supports it, over a stateful connection. Measure total bytes sent, execution time, and cost per task. The delta tells you whether transport optimization is worth prioritizing or whether your overhead is elsewhere.
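The measurement and audit steps in this checklist reduce to a small per-task report. In this sketch the `CallUsage` field names are hypothetical: cached and output counts would come from your provider's usage data, and tool-definition tokens are something you would count yourself with the model's tokenizer. The 20% flag mirrors the audit threshold in the checklist.

```python
from dataclasses import dataclass


@dataclass
class CallUsage:
    """Token counts for one model call (hypothetical field names)."""
    tool_definition_tokens: int
    conversation_tokens: int
    cached_input_tokens: int
    output_tokens: int


def task_report(calls: list[CallUsage], context_window: int) -> dict:
    input_total = sum(c.tool_definition_tokens + c.conversation_tokens for c in calls)
    tool_total = sum(c.tool_definition_tokens for c in calls)
    cached_total = sum(c.cached_input_tokens for c in calls)
    worst_tool_share = max((c.tool_definition_tokens / context_window for c in calls),
                           default=0.0)
    return {
        "input_tokens": input_total,
        "output_tokens": sum(c.output_tokens for c in calls),
        "cached_share_of_input": cached_total / input_total if input_total else 0.0,
        "tool_def_share_of_input": tool_total / input_total if input_total else 0.0,
        # Audit threshold from the checklist: tool schemas above 20% of the window.
        "tool_defs_exceed_20pct_of_window": worst_tool_share > 0.20,
    }


report = task_report(
    [CallUsage(3_000, 1_000, 0, 200), CallUsage(3_000, 5_000, 3_000, 300)],
    context_window=10_000,
)
print(report)
```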

The Real Lesson

Most teams optimizing AI agents focus on prompt engineering and model selection. Those matter. But if your agent is re-sending 80% redundant context on every call, running 50 tool calls per task, and paying full price for tokens the model already processed, the transport layer is where the money is going.

The best-optimized prompt in the world does not help if you are transmitting it 50 times.

This is an infrastructure problem, not a model problem. And like most infrastructure problems, the teams that measure it first are the ones that fix it first.

What is the transport tax for AI agents?

The transport tax is the cumulative overhead of re-sending conversation context on every API call in a stateless protocol. For multi-turn agents, each call includes the full history of prior turns, system instructions, and tool definitions. This creates quadratic cost growth: if every turn adds a similar amount of content, a 10-turn conversation sends roughly 55x the content of a single turn, not 10x. In the InfoQ benchmarks, stateful continuation cut client-sent data by 82-86%.

How does prompt caching reduce agent costs?

Prompt caching stores the processed state of static prompt components (system instructions, tool definitions, reference documents) on the server. Subsequent calls reference the cache instead of re-processing these tokens. This reduces the cost of cached input tokens by up to roughly 90% and time-to-first-token by up to roughly 80%. Both Anthropic and OpenAI offer prompt caching, though implementation details and discounts differ.

Why do agentic systems cost 5-30x more than chat?

Chat interactions are typically single-turn or short multi-turn. Agents run multi-step workflows with dozens of tool calls, each of which expands the conversation history. Multi-agent systems can use up to 15x more tokens than single-agent chat. The combination of quadratic context growth, tool metadata overhead, and the need for high accuracy (requiring more turns) compounds into dramatically higher costs.

Can I use stateful continuation with Anthropic Claude?

Not yet. As of April 2026, Anthropic uses Server-Sent Events for streaming responses but does not support server-side conversation state retention through WebSocket connections. OpenAI, through its Responses API (stateful support since February 2026), is currently the only major provider offering full stateful continuation. Prompt caching is available from both providers and addresses the tokenization layer of the overhead.

How do I know if my agent is transport-bottlenecked?

Measure three things: total bytes sent per task (compare against the actual new information per turn), input token cost as a percentage of total cost (if input tokens dominate, you are paying the retransmission tax), and time-to-first-token across turns (if TTFT rises with conversation depth, the model is re-processing context that should be cached). If any of these metrics grows with conversation length rather than staying flat, transport is likely your bottleneck.
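"Grows with conversation length rather than staying flat" can be checked mechanically by fitting a line to a per-turn metric. This is a minimal sketch: `grows_with_depth` is a hypothetical helper, the 5%-of-mean-per-turn tolerance is an arbitrary assumption, and the sample measurements are illustrative rather than real data.

```python
def slope(values: list[float]) -> float:
    """Least-squares slope of `values` against turn index 0, 1, 2, ..."""
    n = len(values)
    if n < 2:
        return 0.0
    mean_x = (n - 1) / 2
    mean_y = sum(values) / n
    num = sum((x - mean_x) * (y - mean_y) for x, y in enumerate(values))
    den = sum((x - mean_x) ** 2 for x in range(n))
    return num / den


def grows_with_depth(per_turn: list[float], tolerance: float = 0.05) -> bool:
    """True if the metric rises by more than `tolerance` x its mean per turn."""
    if not per_turn:
        return False
    mean = sum(per_turn) / len(per_turn)
    return mean > 0 and slope(per_turn) > tolerance * mean


# Illustrative per-turn measurements for one task (not real data).
request_kb = [4.1, 6.2, 8.3, 10.2, 12.4]   # grows: full history re-sent each turn
ttft_s = [0.62, 0.60, 0.63, 0.61, 0.62]    # roughly flat
print(grows_with_depth(request_kb), grows_with_depth(ttft_s))
```

Run it separately on request bytes, input tokens, and TTFT per turn; any metric that returns `True` is scaling with conversation depth.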

Stop Re-Sending What Your Agents Already Know

TierZero's Context Engine uses hybrid search, graph traversal, and progressive compression to give production agents exactly the context they need on each turn. No redundant retransmission. No context rot. Every investigation gets faster, not more expensive.

Co-Founder & CEO at TierZero AI

Previously Director of Engineering at Niantic. CTO of Mayhem.gg (acq. Niantic). Owned social infrastructure for 50M+ daily players. Tech Lead for Meta Business Manager.
