Your AI Provider Had 5 Outages Last Month. Now What?

AI APIs averaged more incidents per service than any other category in early 2026. A guide for engineering leaders on detection, fallbacks, and building production resilience.

AI and ML APIs are the least reliable API category tracked across 215+ services. Here is what engineering leaders need to do about it before their next provider incident.

Anthropic shipped 14 features in March 2026. They also had 5 production outages. OpenAI logged 11 incidents in 28 days in January. GitHub's uptime dropped to 90.21% over a 90-day window as AI coding agents hammered their infrastructure.

If you are building on AI infrastructure, this is your new normal.


The Fastest-Moving Dependencies You Have Ever Had

Your database vendor ships a major release once or twice a year. Your cloud provider changes APIs quarterly. Your AI provider ships 14 features in a month and breaks production 5 times in the process.

This is the fundamental tension in AI infrastructure right now. Providers are in an arms race. They are shipping models, APIs, and capabilities at a pace that makes SaaS release cycles look glacial. And every team building on top of them inherits that velocity, along with the instability it creates.

The Nordic APIs reliability report covering October 2025 through February 2026 tracked 215+ services. AI and ML APIs came in dead last for reliability. Not by a small margin. OpenAI logged one incident every 2.5 days in January 2026. Anthropic had multiple incidents per week, including a 30-hour resolution cycle for Claude Opus 4.5 in late January.

Compare that to payments infrastructure. Stripe runs at roughly 99.99% uptime. Linear sits at 99.96%. OpenAI's API components dipped to 98.89% during one stretch. That is a different universe of reliability.

The Numbers Tell the Story

| Provider / Platform | Incidents | Period | Notes |
|---|---|---|---|
| OpenAI | 11 incidents | January 2026 (28 days) | One every 2.5 days. Most resolved in 30-90 min. |
| Anthropic | Multiple per week | Late Jan 2026 | Claude Opus 4.5 had a 30-hour resolution cycle. |
| GitHub | 37 in Feb, 28 in Mar | Feb-Mar 2026 | 90.21% uptime over 90 days. AI agents amplified load. |
| AWS DynamoDB | 1 major incident | October 2025 | Cascaded into 141 affected services. |
| Cloudflare | 1 major outage | November 2025 | Took down thousands of sites including parts of OpenAI. |

Sources: Nordic APIs 2026 Report, GitHub Reliability Analysis

The pattern is clear. AI infrastructure is shipping fast and breaking often. And the blast radius is growing because AI is now load-bearing in production workflows. A GitHub outage in 2024 was annoying. A GitHub outage in 2026, when AI coding agents are running CI pipelines every few minutes, cascades into hours of lost engineering productivity.

Three Failure Modes You Need to Plan For

Most teams think about AI provider outages as binary: it is up or it is down. The reality is messier.

1. Hard downtime

The API returns 5xx errors or times out. This is the easy one to detect. Your monitoring catches it. The problem is that most teams have no automated fallback. Engineers open the status page, wait, and context-switch to something else. If a 50-person engineering team paying developers $80 to $150 per hour is fully blocked, it loses $4,000 to $7,500 for every hour a critical dependency is down.
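The dollar range is plain multiplication: 50 engineers at $80 or $150 per hour, assuming every one of them is blocked for the full hour. A minimal sketch that makes that assumption explicit; `downtime_cost_per_hour` and its `fraction_blocked` parameter are hypothetical names, not from the source, for teams where only part of the team actually stops working:

```python
def downtime_cost_per_hour(team_size: int, hourly_rate: float,
                           fraction_blocked: float = 1.0) -> float:
    """Idle engineering cost for one hour of dependency downtime.

    fraction_blocked is a hypothetical knob (not from the source): the share
    of the team that stops working while the dependency is down (1.0 = all).
    """
    return team_size * hourly_rate * fraction_blocked


# Low and high ends of the $80-$150/hour range, whole team blocked.
low = downtime_cost_per_hour(50, 80)
high = downtime_cost_per_hour(50, 150)
print(f"${low:,.0f} to ${high:,.0f} per hour")  # $4,000 to $7,500 per hour
```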

2. Degraded quality

The API returns 200 OK but the output is wrong, slow, or truncated. This is harder to catch because your health checks pass. The model is technically responding. It is just responding badly. Latency spikes from 500ms to 8 seconds. Output quality drops. Structured responses start failing schema validation.
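Because health checks pass in this failure mode, detection has to inspect the response body itself. A minimal sketch of schema validation on a 200 OK response using only the standard library; the field names in `EXPECTED_FIELDS` are a hypothetical schema, and a real service would more likely use a dedicated schema-validation library:

```python
import json

# Hypothetical schema for a structured model response: field -> expected type.
EXPECTED_FIELDS = {"summary": str, "sentiment": str, "confidence": float}


def validate_structured_output(raw_body: str, expected=EXPECTED_FIELDS) -> list[str]:
    """Return problems found in a 200 OK model response (empty list = looks valid)."""
    try:
        data = json.loads(raw_body)
    except json.JSONDecodeError:
        # Truncated or non-JSON output is a quality failure, not a transport failure.
        return ["body is not valid JSON (possibly truncated)"]
    if not isinstance(data, dict):
        return ["top-level value is not an object"]
    problems = []
    for field, expected_type in expected.items():
        if field not in data:
            problems.append(f"missing field: {field}")
        elif not isinstance(data[field], expected_type):
            problems.append(f"wrong type for {field}: {type(data[field]).__name__}")
    return problems
```

Counting non-empty results per minute gives you the schema-failure rate as a metric, separate from the HTTP error rate.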

According to the 2025 DORA report, only 24% of respondents trust AI-generated output "a lot." When degraded quality incidents happen silently, that trust erodes even faster.

3. Cascade failures

Your AI provider is fine, but something upstream broke. The October 2025 AWS DynamoDB incident took out 141 services. Cloudflare's November outage brought down parts of OpenAI itself. Your AI dependency chain is deeper than you think, and a failure anywhere in it reaches your users.

What Your On-Call Runbook Is Missing

Most incident runbooks were written for services you operate. They assume you can SSH into the box, read the logs, and fix the code. When your AI provider degrades, none of that applies. You are debugging someone else's infrastructure with almost no visibility.

Here is what your AI provider incident runbook should include:

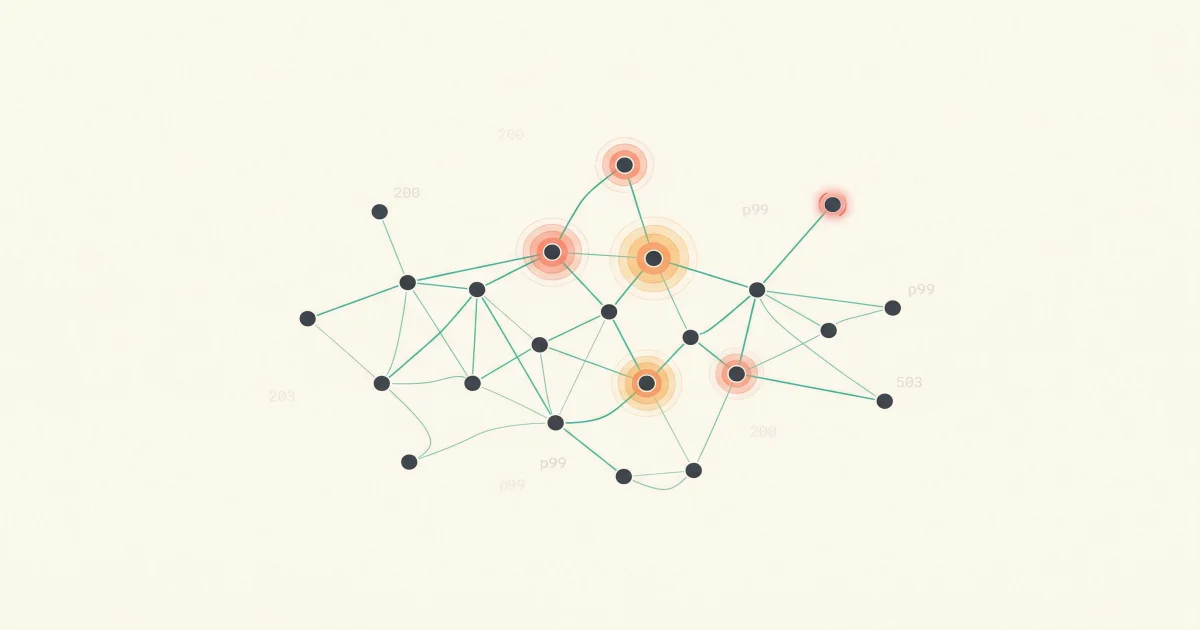

Detection

Set up synthetic probes that test actual model responses, not just endpoint health. A latency spike from 500ms to 4 seconds is an incident even if the status page says "operational." Track error rate, p50/p95/p99 latency, and output quality as separate signals.
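A synthetic probe can be as simple as sending a prompt with a known answer and recording the three signals separately. A minimal sketch, assuming `call_model` is a hypothetical wrapper around your provider SDK that raises on 5xx errors and timeouts; the prompt, the exact-match quality check, and the nearest-rank percentile are illustrative choices, not a standard:

```python
import math
import time
from typing import Callable


def percentile(samples: list[float], pct: float) -> float:
    """Nearest-rank percentile; assumes samples is non-empty."""
    ordered = sorted(samples)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


def run_probe(call_model: Callable[[str], str], runs: int = 20) -> dict:
    """Send a fixed prompt with a known answer; report errors, latency, quality.

    call_model is a hypothetical stand-in for your provider client: it should
    raise on transport failures (5xx, timeouts) and return the model's text.
    """
    prompt, expected = "What is 2 + 2? Answer with the number only.", "4"
    latencies, errors, wrong = [], 0, 0
    for _ in range(runs):
        start = time.monotonic()
        try:
            answer = call_model(prompt)
        except Exception:
            errors += 1  # hard failure signal
            continue
        latencies.append(time.monotonic() - start)
        if answer.strip() != expected:
            wrong += 1  # 200 OK but wrong output: a separate quality signal
    ok = runs - errors
    return {
        "error_rate": errors / runs,
        "p50_s": percentile(latencies, 50) if latencies else None,
        "p95_s": percentile(latencies, 95) if latencies else None,
        "p99_s": percentile(latencies, 99) if latencies else None,
        "quality_failure_rate": wrong / ok if ok else None,
    }
```

In production you would run this on a schedule, ship the dict to your metrics backend, and alert on each key independently.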

Triage

Classify by blast radius. Which production features depend on this provider? Can they degrade gracefully? Do they have fallbacks? For most teams right now, the answer to both of the last two questions is "no."
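The triage questions map onto a small inventory lookup. A minimal sketch, assuming you keep a record per feature of which provider it calls and whether it has a fallback or a defined degraded behavior; `Feature`, `triage`, and the bucket names are hypothetical:

```python
from dataclasses import dataclass


@dataclass
class Feature:
    name: str
    provider: str              # AI provider this feature calls
    has_fallback: bool         # can it switch to another provider automatically?
    degrades_gracefully: bool  # does it have a defined non-AI behavior?


def triage(features: list[Feature], degraded_provider: str) -> dict[str, list[str]]:
    """Bucket features that depend on the degraded provider by exposure."""
    buckets = {"covered": [], "degraded": [], "broken": []}
    for f in features:
        if f.provider != degraded_provider:
            continue  # outside the blast radius of this incident
        if f.has_fallback:
            buckets["covered"].append(f.name)
        elif f.degrades_gracefully:
            buckets["degraded"].append(f.name)
        else:
            buckets["broken"].append(f.name)  # users will see failures here
    return buckets
```

Features in the `broken` bucket are the ones users notice first, which makes them the starting point for both the incident response and the follow-up work.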

Mitigation

Multi-provider routing should activate automatically, not manually. If your fallback requires an engineer to change a config and redeploy, it is not a real fallback. Use an AI gateway with automatic failover. Test the failover path monthly.
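The core of automatic failover is an ordered list of providers that the request path walks on its own, with no human in the loop. A minimal sketch of that routing logic, not the API of any particular AI gateway; each entry is a hypothetical `(name, call)` pair wrapping your SDK client, and each call is assumed to enforce its own timeout and raise on failure:

```python
import logging
from typing import Callable, Sequence

logger = logging.getLogger("ai_router")


class AllProvidersFailed(Exception):
    pass


def complete_with_failover(
    prompt: str,
    providers: Sequence[tuple[str, Callable[[str], str]]],
) -> tuple[str, str]:
    """Try each (name, call) pair in order and return (provider_name, text).

    Failing over is just moving to the next entry: no config change, no redeploy.
    """
    errors = []
    for name, call in providers:
        try:
            return name, call(prompt)
        except Exception as exc:  # broad on purpose: any failure triggers failover
            logger.warning("provider %s failed: %r; trying next", name, exc)
            errors.append(f"{name}: {exc!r}")
    raise AllProvidersFailed("; ".join(errors))
```

Returning the provider name alongside the text lets you count how often traffic is served by the fallback, which is itself a useful incident signal.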

Communication

Your users do not care which provider broke. They care that your product is broken. Have pre-written status page templates for "AI feature degraded" that do not blame your upstream provider.

Building Resilience Into Your AI Stack

The Cockroach Labs State of AI Infrastructure report found that 63% of infrastructure leaders say their leadership teams underestimate how quickly AI demands will outpace existing infrastructure. And 77% expect AI to drive at least 10% of all service disruptions in the coming year.

Those numbers mean this is not a problem you can defer. Here is what to prioritize:

Multi-provider routing. Treat this like you treat multi-AZ deployments. No single point of failure. Define primary and fallback models for each use case. Use an AI gateway that handles routing, failover, and cost tracking in one layer. LangChain's State of AI Agents report found 89% of teams with production agents have already invested in observability. Routing should be next.

AI-specific observability. Traditional APM does not catch AI quality degradation. You need monitoring that tracks response quality, not just response codes. Set up alerts on latency percentiles, token throughput, and schema validation failure rates. When a provider degrades silently, the first sign is often in the output, not the status code.
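Alert rules for these signals can be expressed as thresholds over a short evaluation window. A minimal sketch; `WindowMetrics`, the threshold values, and the window length are assumptions to tune against your own baseline, and in practice these rules would usually live in your monitoring system rather than in application code:

```python
from dataclasses import dataclass


@dataclass
class WindowMetrics:
    """Aggregates for one provider over one evaluation window (e.g. 5 minutes)."""
    p95_latency_s: float
    tokens_per_s: float
    requests: int
    schema_failures: int


# Hypothetical thresholds; tune them against your own baseline.
MAX_P95_LATENCY_S = 4.0
MIN_TOKENS_PER_S = 20.0
MAX_SCHEMA_FAILURE_RATE = 0.02


def evaluate_alerts(m: WindowMetrics) -> list[str]:
    """Return alert messages; every check can fire even if all responses were 200 OK."""
    alerts = []
    if m.p95_latency_s > MAX_P95_LATENCY_S:
        alerts.append(f"p95 latency {m.p95_latency_s:.1f}s > {MAX_P95_LATENCY_S}s")
    if m.tokens_per_s < MIN_TOKENS_PER_S:
        alerts.append(f"throughput {m.tokens_per_s:.0f} tok/s < {MIN_TOKENS_PER_S:.0f}")
    if m.requests and m.schema_failures / m.requests > MAX_SCHEMA_FAILURE_RATE:
        rate = m.schema_failures / m.requests
        alerts.append(f"schema failure rate {rate:.1%} > {MAX_SCHEMA_FAILURE_RATE:.0%}")
    return alerts
```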

Graceful degradation. Every AI-powered feature should have a defined behavior for when the AI is unavailable. Show cached results. Fall back to rule-based logic. Display a clear message. Crashing or hanging is not acceptable.
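The fallback chain in this paragraph (cached result, then rule-based logic, then a clear message) can be written as one wrapper around the AI call. A minimal sketch; `call_model`, `rule_based`, and the dict-based cache are hypothetical hooks, and `call_model` is assumed to enforce a timeout so a hung provider raises instead of blocking:

```python
from typing import Callable, Optional


def answer_with_degradation(
    query: str,
    call_model: Callable[[str], str],
    cache: dict[str, str],
    rule_based: Callable[[str], Optional[str]],
) -> tuple[str, str]:
    """Return (source, text), stepping down through defined fallbacks.

    call_model (assumed to enforce its own timeout) and rule_based are
    hypothetical hooks; the plain dict stands in for your real cache.
    """
    try:
        text = call_model(query)
        cache[query] = text  # refresh the cache on every successful call
        return "model", text
    except Exception:
        pass  # fall through to the degraded paths below
    if query in cache:
        return "cache", cache[query]
    rule_answer = rule_based(query)
    if rule_answer is not None:
        return "rules", rule_answer
    return "message", "This feature is temporarily unavailable. Please try again shortly."
```

The `source` value tells the UI which state it is in, so it can label stale or rule-based answers instead of presenting them as fresh AI output.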

Tested fallback paths. Chaos engineering for AI dependencies. Kill the primary provider in staging every month. If your fallback path breaks the first time you actually need it, you never had a fallback. The production teams that handle provider incidents calmly are the ones who have practiced their response before the real incident hits.
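A failover drill needs two pieces: a way to take the primary away on demand, and a check that the fallback actually answers. A minimal in-process sketch; `KillSwitch`, `route`, and `failover_drill` are hypothetical stand-ins, and a real staging drill would more likely block the primary at the network or gateway level:

```python
from typing import Callable


class KillSwitch:
    """Wraps a provider call; once killed, it fails the way an outage would."""

    def __init__(self, call: Callable[[str], str]):
        self.call = call
        self.killed = False

    def __call__(self, prompt: str) -> str:
        if self.killed:
            raise ConnectionError("injected outage: primary provider killed")
        return self.call(prompt)


def route(prompt: str, providers) -> tuple[str, str]:
    """Hypothetical stand-in for your real router: first provider that answers wins."""
    for name, call in providers:
        try:
            return name, call(prompt)
        except Exception:
            continue
    raise RuntimeError("no provider answered")


def failover_drill(primary_call, fallback_call, prompt: str = "health check"):
    """Return (provider before kill, provider after kill); expect ('primary', 'fallback')."""
    primary = KillSwitch(primary_call)
    providers = [("primary", primary), ("fallback", fallback_call)]
    before = route(prompt, providers)[0]
    primary.killed = True  # the monthly drill: take the primary away
    after = route(prompt, providers)[0]
    return before, after
```

If the fallback itself is broken, `failover_drill` raises instead of returning, which is exactly the failure you want to find in staging rather than during a real incident.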

What To Do This Week

- Inventory your AI dependencies. List every AI provider your production services call. Include transitive dependencies through third-party tools that use AI under the hood. You probably have more than you think.
- Check your monitoring. Can you detect a 3x latency spike from your AI provider within 5 minutes? If not, add synthetic probes that test actual model responses.
- Define degraded behavior. For each AI-powered feature, document what happens when the provider is down. If the answer is "the feature breaks," fix that first.
- Set up multi-provider routing. Even if you only use one provider today, adding a fallback model takes hours and could save you the next time your primary provider has a bad week.
- Run a tabletop exercise. Walk your on-call team through this scenario: "Your AI provider's API is returning 200 OK but the output quality has dropped significantly. How do you detect it? What do you do?" If nobody has an answer, that is your priority.
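The monitoring check in the list above (a 3x latency spike caught within 5 minutes) can be sketched as a rolling-window detector fed by synthetic probe results. The baseline value, the use of the median rather than p95, and the minimum sample count are assumptions for illustration; `LatencySpikeDetector` is a hypothetical name:

```python
import statistics
from collections import deque


class LatencySpikeDetector:
    """Flags when the recent median latency reaches `factor` x a known baseline.

    Defaults are assumptions matching a "3x within 5 minutes" check; timestamps
    are in seconds (e.g. from time.monotonic()).
    """

    def __init__(self, baseline_s: float, factor: float = 3.0,
                 window_s: float = 300.0, min_samples: int = 5):
        self.baseline_s = baseline_s
        self.factor = factor
        self.window_s = window_s
        self.min_samples = min_samples
        self.samples: deque = deque()  # (timestamp, latency) pairs in the window

    def observe(self, ts: float, latency_s: float) -> bool:
        """Record one probe result; return True if the spike condition holds."""
        self.samples.append((ts, latency_s))
        while self.samples and self.samples[0][0] < ts - self.window_s:
            self.samples.popleft()  # drop results older than the window
        if len(self.samples) < self.min_samples:
            return False  # not enough evidence yet
        recent = statistics.median(lat for _, lat in self.samples)
        return recent >= self.factor * self.baseline_s
```

With one probe per minute this needs all five samples in the window, so the detection delay is bounded by the window; probing more often shortens it.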

The Velocity Mismatch Is the Real Problem

The tension between AI development speed and production reliability is the defining infrastructure challenge of 2026. Code is getting written faster than ever. AI tools are accelerating every phase of development. But production operations have not kept pace.

Your monitoring was designed for services you control. Your runbooks assume you can read the logs. Your on-call rotations assume failures are binary. None of that holds when your critical dependencies ship 14 features a month and break 5 times along the way.

Engineering teams that build AI infrastructure resilience now will ship faster with confidence. Teams that treat provider reliability as someone else's problem will keep getting surprised at 3 AM.

Frequently Asked Questions

How do I build fallback strategies for AI API outages?

Start with multi-provider routing at the gateway level. Define a primary and secondary model for each use case. Use circuit breakers that trip on latency spikes, not just 5xx errors. Test your fallback path monthly because providers change their APIs more often than you think.
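A circuit breaker that trips on latency treats a slow 200 OK the same as an error. A minimal sketch, assuming illustrative thresholds (4 seconds, 3 consecutive slow or failed calls, a 30-second cooldown); `LatencyAwareBreaker` and `CircuitOpen` are hypothetical names, and an AI gateway or resilience library would normally provide this behavior:

```python
import time
from typing import Callable, Optional


class CircuitOpen(Exception):
    pass


class LatencyAwareBreaker:
    """Circuit breaker that counts slow successes as failures, not just exceptions.

    Thresholds are illustrative assumptions; clock is injectable for testing.
    """

    def __init__(self, max_latency_s: float = 4.0, failure_threshold: int = 3,
                 cooldown_s: float = 30.0,
                 clock: Callable[[], float] = time.monotonic):
        self.max_latency_s = max_latency_s
        self.failure_threshold = failure_threshold
        self.cooldown_s = cooldown_s
        self.clock = clock
        self.consecutive_failures = 0
        self.opened_at: Optional[float] = None

    def call(self, fn: Callable[[], str]) -> str:
        if self.opened_at is not None:
            if self.clock() - self.opened_at < self.cooldown_s:
                raise CircuitOpen("primary skipped; route to fallback")
            self.opened_at = None  # half-open: let a trial request through
        start = self.clock()
        try:
            result = fn()
        except Exception:
            self._record_failure()
            raise
        if self.clock() - start > self.max_latency_s:
            self._record_failure()  # 200 OK but too slow still counts
        else:
            self.consecutive_failures = 0
        return result

    def _record_failure(self) -> None:
        self.consecutive_failures += 1
        if self.consecutive_failures >= self.failure_threshold:
            self.opened_at = self.clock()
```

When `CircuitOpen` is raised, the caller routes to the secondary model. After the cooldown, a trial request goes through: a fast success closes the circuit, while another failure reopens it immediately.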

Should I self-host AI models to avoid provider outages?

Only if you have the GPU infrastructure and the team to maintain it. Self-hosting eliminates upstream dependency risk but creates a new class of operational problems. For most teams, multi-provider routing through an AI gateway is simpler and more cost-effective.

What metrics should I track for AI provider reliability?

Track error rate, p50/p95/p99 latency, and output quality separately. A provider can return 200 OK while giving you degraded output. Set up synthetic probes that test actual model responses, not just endpoint availability.

How often do major AI providers have outages?

In early 2026, OpenAI averaged one incident every 2.5 days. Anthropic had multiple incidents per week. AI and ML APIs are the least reliable API category across 215+ services tracked by Nordic APIs. Plan accordingly.

What is the cost of AI provider downtime for engineering teams?

It depends on how deeply AI is integrated into your workflows. At $80 to $150 per developer-hour, a 50-person engineering team that is fully blocked loses $4,000 to $7,500 per hour when a critical AI dependency goes down. The indirect cost is worse: broken CI pipelines, stalled deployments, and engineers context-switching to manual workarounds.

Stop Getting Surprised by Provider Outages

TierZero Production Agents detect infrastructure degradation across your entire stack and investigate incidents in under 7 minutes. When your AI dependency breaks at 3 AM, the investigation is done before your on-call engineer opens their laptop.

Co-Founder & CEO at TierZero AI

Previously Director of Engineering at Niantic. CTO of Mayhem.gg (acq. Niantic). Owned social infrastructure for 50M+ daily players. Tech Lead for Meta Business Manager.
