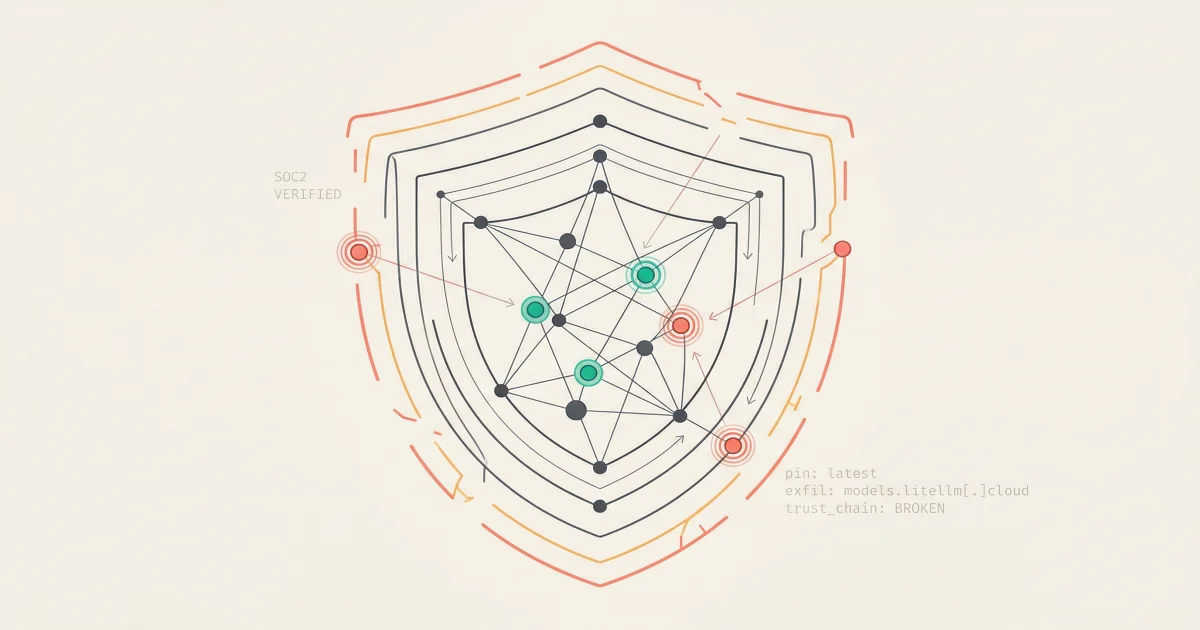

SOC 2 Won't Save You From Supply Chain Attacks

LiteLLM's supply chain attack and the Delve compliance scandal reveal why SOC 2 badges are lagging indicators and what behavioral detection looks like.

LiteLLM was SOC 2 certified when credential-stealing malware hit its supply chain. Its compliance vendor is accused of fabricating evidence. What the trust chain gap means for your AI infrastructure.

LiteLLM handles 3.4 million downloads per day. It had SOC 2 Type II and ISO 27001 certifications prominently displayed on its website. On March 24, 2026, credential-stealing malware shipped in two of its PyPI releases for three hours.

The certifications were supposed to prevent exactly this.

Worse: the company that certified LiteLLM, a Y Combinator startup called Delve, is now accused of fabricating compliance evidence entirely. The SOC 2 badge was not just insufficient. It may have been fake.

This is not a story about one startup getting hacked. It is a story about how trust chains work in AI infrastructure, and how most engineering teams have no visibility into whether those chains are intact.

The Week That Broke a Trust Chain

The timeline tells the story.

| Date | Event |
|---|---|
| March 19, 2026 | Attackers rewrite Git tags in the Trivy security scanner repository, pointing releases to malicious code |
| March 22, 2026 | Anonymous whistleblower "DeepDelver" accuses Delve of fake compliance, fabricated evidence, rubber-stamp auditors |
| March 23, 2026 | Attackers register models.litellm[.]cloud for data exfiltration. Insight Partners scrubs their Delve investment post |
| March 24, 2026 | Malicious LiteLLM versions 1.82.7 and 1.82.8 published to PyPI. Malware active for ~3 hours before quarantine |
| March 26, 2026 | Researcher Callum McMahon publishes discovery. Silicon Valley's two biggest dramas intersect |
| March 30, 2026 | LiteLLM ditches Delve, announces recertification through Vanta. DeepDelver releases alleged receipts |

Two separate failures converged. A technical supply chain attack. A compliance system that was allegedly faking its output. Neither was visible through the other's lens.

How the Attack Actually Worked

The technical analysis from Snyk reveals a five-step supply chain compromise.

Step 1: Compromise the security scanner. Attackers rewrote Git tags in the trivy-action repository to point to a malicious release. Trivy is a widely used open-source vulnerability scanner. LiteLLM's CI/CD pipeline pulled Trivy from apt without a pinned version.
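Because Git tags are mutable, a workflow that references an action by tag can silently pick up rewritten code, while a reference to a full commit SHA cannot be repointed. The following is a minimal sketch of a check for that, assuming workflows are available as plain text. The regular expressions and the example workflow (including the `example-org` action names) are illustrative assumptions, not LiteLLM's actual pipeline.

```python
import re

# Matches `uses: owner/repo[/path]@ref` lines in a GitHub Actions workflow.
# Local actions (`uses: ./path`) have no `@ref` and are not matched.
USES_RE = re.compile(r"^\s*-?\s*uses:\s*([^\s@#]+)@([^\s#]+)", re.MULTILINE)
FULL_SHA_RE = re.compile(r"^[0-9a-f]{40}$")


def find_unpinned_actions(workflow_text: str) -> list[tuple[str, str]]:
    """Return (action, ref) pairs whose ref is not a full 40-character commit SHA."""
    unpinned = []
    for action, ref in USES_RE.findall(workflow_text):
        if not FULL_SHA_RE.match(ref):
            unpinned.append((action, ref))
    return unpinned


# Hypothetical workflow snippet, for illustration only.
EXAMPLE_WORKFLOW = """
jobs:
  scan:
    runs-on: ubuntu-latest
    steps:
      - uses: actions/checkout@v4
      - uses: example-org/scanner-action@0123456789abcdef0123456789abcdef01234567
      - name: Scan
        uses: example-org/other-action@main
"""

if __name__ == "__main__":
    for action, ref in find_unpinned_actions(EXAMPLE_WORKFLOW):
        print(f"unpinned: {action}@{ref}")
```

A check like this only covers `uses:` references. Tools installed inside a job, for example through a package manager, need their own version pins.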

Step 2: Steal publishing credentials. The compromised Trivy action exfiltrated LiteLLM's PYPI_PUBLISH token from the GitHub Actions runner environment. Standard integrity checks passed because the malicious packages were published using legitimate credentials, not injected after the fact.

Step 3: Ship the malware. Two LiteLLM versions were published with different injection techniques. Version 1.82.7 embedded base64-encoded payloads in proxy_server.py. Version 1.82.8 added a .pth file that executes on every Python interpreter startup with no import required.
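The `.pth` technique works because Python's `site` module executes any line in a `.pth` file that begins with `import` followed by a space or tab. A rough sketch of a scanner for such lines follows; the function name is an assumption for illustration, and the output needs human review because some legitimate packages also ship executable `.pth` lines.

```python
from pathlib import Path


def find_executable_pth_lines(site_dir: Path) -> list[tuple[str, int, str]]:
    """Return (filename, line_number, line) for .pth lines the site module would exec."""
    findings = []
    for pth_file in sorted(site_dir.glob("*.pth")):
        text = pth_file.read_text(encoding="utf-8", errors="replace")
        for number, line in enumerate(text.splitlines(), start=1):
            # site.py executes lines that start with "import" plus a space or tab;
            # other non-comment lines are treated as paths to add to sys.path.
            if line.startswith(("import ", "import\t")):
                findings.append((pth_file.name, number, line.strip()))
    return findings


if __name__ == "__main__":
    import site

    for directory in site.getsitepackages():
        for name, number, line in find_executable_pth_lines(Path(directory)):
            print(f"{directory}/{name}:{number}: {line[:80]}")
```

Comparing the findings against a known-good list from a clean environment is one way to separate expected entries from new ones.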

Step 4: Harvest everything. The malware collected SSH keys, .env files, AWS/GCP/Azure credentials, Docker registry credentials, Kubernetes configurations, and cryptocurrency wallet data. Everything was encrypted with AES-256-CBC, with the session key protected by a hardcoded RSA public key, then bundled and uploaded to models.litellm[.]cloud.

Step 5: Spread. If Kubernetes credentials existed, the malware deployed privileged pods named node-setup-{node_name} across the cluster, mounting the host filesystem to install persistent access.
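The pod pattern in Step 5 is specific enough to check for directly. The sketch below assumes pod objects have been loaded as dictionaries, for example from the JSON output of `kubectl get pods -A -o json`. The function names and the example pod are hypothetical.

```python
def is_suspicious_pod(pod: dict) -> bool:
    """Flag pods matching the described pattern: a node-setup-* name,
    a privileged container, and a hostPath volume."""
    name = pod.get("metadata", {}).get("name", "")
    spec = pod.get("spec", {})
    privileged = any(
        (container.get("securityContext") or {}).get("privileged") is True
        for container in spec.get("containers", [])
    )
    host_mount = any("hostPath" in volume for volume in spec.get("volumes", []))
    return name.startswith("node-setup-") and privileged and host_mount


def suspicious_pod_names(pod_list: dict) -> list[str]:
    """Return names of suspicious pods from a `kubectl get pods -o json` style document."""
    return [
        pod["metadata"]["name"]
        for pod in pod_list.get("items", [])
        if is_suspicious_pod(pod)
    ]


# Hypothetical pod object, for illustration only.
EXAMPLE_POD = {
    "metadata": {"name": "node-setup-worker-1"},
    "spec": {
        "containers": [{"name": "setup", "securityContext": {"privileged": True}}],
        "volumes": [{"name": "host", "hostPath": {"path": "/"}}],
    },
}

if __name__ == "__main__":
    print(suspicious_pod_names({"items": [EXAMPLE_POD]}))
```

Matching on the name prefix is brittle, since an attacker can rename the pods. Dropping that condition and reviewing every privileged pod with a host mount is the more general check.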

The discovery was almost accidental. Researcher Callum McMahon's machine crashed after installing LiteLLM because the malware had a bug that caused RAM exhaustion. As McMahon and AI researcher Andrej Karpathy both noted, the sloppiness of the code suggested it was "vibe coded." A better-written version would have run silently.

The Compliance Layer Was Already Broken

Separate from the technical attack, LiteLLM's compliance foundation was crumbling.

LiteLLM obtained its SOC 2 Type II and ISO 27001 certifications through Delve, a compliance automation startup founded by 21-year-old MIT dropouts. Delve graduated from Y Combinator in 2023 and raised a $32 million Series A at a $300 million valuation from Insight Partners.

The whistleblower allegations are severe:

Fabricated audit evidence

DeepDelver claims Delve generated auditor conclusions, test procedures, and final reports before any independent review occurred. The startup allegedly "inverts" the normal compliance structure by acting as both implementer and examiner.

Rubber-stamp auditors

Virtually all Delve clients reportedly went through two audit firms, Accorp and Gradient, which the whistleblower described as "part of the same operation." Both primarily operate in India with nominal US presence.

Impossible timelines

LiteLLM obtained both certifications in under 60 days. SOC 2 Type II audits typically take six months to a year because they require observing controls over a defined period. A 60-day timeline is not efficient automation. It is a red flag.

Delve's CEO denied the allegations, calling them "misleading" and describing Delve as an "automation platform" rather than an audit issuer. The whistleblower responded with alleged receipts including video and Slack messages.

The consequences extend beyond reputation. False HIPAA compliance can carry criminal liability. GDPR violations can result in fines of up to 20 million euros or 4% of global annual revenue, whichever is higher.

Why Compliance Badges Are Lagging Indicators

Even if LiteLLM's compliance had been legitimate, SOC 2 would not have caught this attack.

SOC 2 evaluates whether an organization has security processes: access controls, change management, monitoring procedures. It does not test whether a CI/CD pipeline pins its dependencies. It does not scan for compromised GitHub Actions. It does not detect credential exfiltration from a build runner.

This is the structural gap. Compliance frameworks audit policy. Supply chain attacks exploit implementation. The two operate on different planes.

The numbers show why this matters:

| Metric | Number | Source |
|---|---|---|
| Increase in large supply chain compromises since 2020 | ~4x | IBM X-Force 2026 |
| Third-party involvement in breaches (2024 to 2025) | 15% to 30% | IBM X-Force 2026 |
| Malware growth on open-source platforms (2025) | 73% | ReversingLabs 2026 |
| Malicious packages found across open-source registries | 512,847+ | ReversingLabs / Sonatype 2025 |
| Open-source AI/ML repos with critical CI/CD vulnerabilities | 70% | Mitiga 2026 |
| Average cost of a supply chain breach | $4.4M | IBM Cost of a Data Breach 2025 |

According to Mitiga's 2026 analysis, 70% of open-source AI/ML repositories have critical or high-severity vulnerabilities in their GitHub Actions workflows. The LiteLLM attack is not an outlier. It is what happens when the statistics catch up with a specific project.

What Your Vendor Due Diligence Is Missing

Most vendor security reviews follow the same pattern: request the SOC 2 report, check the box, move on. That process assumes the audit was legitimate, the controls are still running, and the audit scope covers the risks you actually face.

All three assumptions failed in the LiteLLM case.

Here is what to add to your checklist:

1. Ask who audited them. Not which platform they used. Which audit firm signed the report? Can you find that firm independently? Do they have other recognizable clients?

2. Ask about their build pipeline. Do they pin dependency versions? Do they use reproducible builds? How is their CI/CD pipeline hardened? SOC 2 does not require answers to these questions.

3. Check the timeline. If a vendor obtained SOC 2 Type II in under 90 days, ask how. The "Type II" designation requires observing controls over a period, typically six to twelve months. If the observation period was 60 days, ask why.

4. Ask about detection, not prevention. How quickly can they detect a compromised release? LiteLLM's malicious versions were live for three hours. The detection came from an external researcher whose machine crashed. Ask what their mean time to detection looks like for a supply chain compromise.

5. Look at behavioral monitoring. The LiteLLM malware generated detectable signals: unusual outbound connections to models.litellm[.]cloud, credential reads across multiple cloud providers, file writes in site-packages/, and Kubernetes API calls deploying privileged pods. None of these signals were caught by compliance-level controls. They would have been caught by behavioral analysis that correlates across logs and network traffic.
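To make item 5 concrete, here is a minimal sketch of correlation across signals: no single event raises an alert, but a host that shows several distinct signal types in a short window does. The signal labels, event shape, window, and threshold are all assumptions chosen for illustration, not a description of any particular product.

```python
from collections import defaultdict
from datetime import datetime, timedelta

# Illustrative labels for the four behaviors listed in item 5.
SIGNALS = {
    "new_outbound_domain",
    "multi_cloud_credential_read",
    "site_packages_write",
    "privileged_pod_create",
}


def correlate(events, window=timedelta(minutes=30), min_signals=3):
    """Return {host: signals} for hosts showing at least `min_signals`
    distinct signal types within `window` of each other."""
    by_host = defaultdict(list)
    for event in events:
        if event["signal"] in SIGNALS:
            by_host[event["host"]].append((event["time"], event["signal"]))

    flagged = {}
    for host, items in by_host.items():
        items.sort()  # order by timestamp
        for index, (start, _) in enumerate(items):
            seen = {signal for time, signal in items[index:] if time - start <= window}
            if len(seen) >= min_signals:
                flagged[host] = sorted(seen)
                break
    return flagged


if __name__ == "__main__":
    t0 = datetime(2026, 3, 24, 10, 0)
    events = [
        {"host": "ci-1", "time": t0, "signal": "site_packages_write"},
        {"host": "ci-1", "time": t0 + timedelta(minutes=2), "signal": "multi_cloud_credential_read"},
        {"host": "ci-1", "time": t0 + timedelta(minutes=5), "signal": "new_outbound_domain"},
        {"host": "web-1", "time": t0, "signal": "new_outbound_domain"},
    ]
    print(correlate(events))
```

In practice the events would come from separate sources (network flow logs, file integrity monitoring, Kubernetes audit logs), which is why the correlation step matters.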

What to Do This Week

- Audit your CI/CD dependency pinning. Check every GitHub Action, build tool, and scanner in your pipeline for unpinned versions. The Trivy compromise worked because LiteLLM pulled the latest version automatically. Pin versions. Verify checksums.

- Request the actual audit report, not the badge. SOC 2 reports are documents with specific scope, observation periods, and exceptions. If a vendor only shows you a badge on their website, you have not reviewed their compliance. You have reviewed their marketing.

- Add behavioral baselines for outbound traffic. Know what normal outbound connection patterns look like for your critical services. Credential exfiltration to a newly registered domain is detectable if you have a baseline to compare against.

- Review your supply chain for single points of failure. LiteLLM is a dependency for DSPy, MLflow, OpenHands, CrewAI, and many other frameworks. If you use any of them, you inherited this risk transitively. Map your AI dependency chain at least two levels deep.

- Treat compliance as a floor, not a ceiling. A vendor's SOC 2 certification tells you they had certain processes at a point in time. It tells you nothing about whether those processes caught the specific threat targeting you right now.
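Mapping a dependency chain can start from what is installed in your own environment. The sketch below uses the standard library's `importlib.metadata` to walk declared requirements and list every path from one package to another. The function names are assumptions for illustration. The walk over-approximates, because it also follows requirements that only apply to optional extras or other platforms, and it normalizes names only by lowercasing.

```python
import re
from importlib import metadata


def direct_requirements(package: str) -> list[str]:
    """Names of the requirements an installed distribution declares."""
    try:
        requires = metadata.requires(package) or []
    except metadata.PackageNotFoundError:
        return []
    names = []
    for requirement in requires:
        # Keep the leading project name; drop version specifiers, extras, markers.
        match = re.match(r"[A-Za-z0-9][A-Za-z0-9._-]*", requirement)
        if match:
            names.append(match.group(0).lower())
    return names


def dependency_paths(root, target, lookup=direct_requirements, max_depth=3):
    """Return every dependency path from `root` to `target`, up to `max_depth` hops."""
    target = target.lower()
    paths = []
    stack = [[root.lower()]]
    while stack:
        path = stack.pop()
        if len(path) - 1 >= max_depth:
            continue
        for dependency in lookup(path[-1]):
            if dependency in path:  # avoid cycles
                continue
            new_path = path + [dependency]
            if dependency == target:
                paths.append(new_path)
            else:
                stack.append(new_path)
    return paths


if __name__ == "__main__":
    # Hypothetical graph for illustration; real use relies on installed metadata.
    graph = {"my-app": ["crewai", "requests"], "crewai": ["litellm"]}
    print(dependency_paths("my-app", "litellm", lookup=lambda p: graph.get(p, [])))
```

With the default `lookup`, calling `dependency_paths("some-framework", "litellm")` in the environment where the framework is installed shows whether, and through which packages, it pulls in LiteLLM.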

The Real Lesson

The LiteLLM story is not about one startup getting hacked. It is about the distance between what compliance certifies and what actually happens in production.

A security scanner was compromised. Build credentials were stolen. Malicious packages were published using legitimate credentials. The compliance vendor that was supposed to verify the controls was allegedly fabricating evidence. And the detection came from a researcher whose laptop crashed because the malware had a bug.

Every link in that chain is a lesson. Pin your dependencies. Verify your auditors. Monitor for behavior, not just signatures. And stop treating a badge on a website as evidence of security.

The trust chain in your AI infrastructure is only as strong as its weakest link. Right now, most teams do not know where that link is.

What is SOC 2 Type II?

A SOC 2 Type II report is an attestation, issued by an independent audit firm under the AICPA's SOC 2 framework, on whether an organization's controls are designed effectively and operating consistently over a defined observation period, typically six to twelve months. The framework defines five trust services criteria: security, availability, processing integrity, confidentiality, and privacy.

What are the signs of a supply chain attack in production?

Unusual outbound connections from trusted processes, credential reads that deviate from baseline patterns, unexpected file writes in dependency directories, and DNS queries to recently registered domains. The LiteLLM malware performed all four. None triggered traditional alerting.
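The last signal, DNS queries to recently registered domains, reduces to a date comparison once registration data is available. The sketch below assumes you already have first-seen dates from DNS logs and registration dates from a WHOIS or RDAP lookup; how those are collected is out of scope, and the 30-day threshold is an arbitrary assumption. The example uses the dates from the timeline above, with the domain kept in its defanged form.

```python
from datetime import date


def newly_registered(first_seen: dict[str, date],
                     registered_on: dict[str, date],
                     max_age_days: int = 30) -> list[tuple[str, int]]:
    """Return (domain, age_in_days) for domains first queried within
    `max_age_days` of their registration date."""
    flagged = []
    for domain, seen in first_seen.items():
        registered = registered_on.get(domain)
        if registered is None:
            continue  # no registration data; handle separately
        age = (seen - registered).days
        if 0 <= age <= max_age_days:
            flagged.append((domain, age))
    return flagged


if __name__ == "__main__":
    first_seen = {"models.litellm[.]cloud": date(2026, 3, 24)}
    registered_on = {"models.litellm[.]cloud": date(2026, 3, 23)}
    print(newly_registered(first_seen, registered_on))
```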

Should I stop using open-source AI tools?

No. But you should treat them as critical infrastructure, not convenience dependencies. Pin versions, verify checksums, monitor for behavioral anomalies, and track your transitive dependency chain. 70% of open-source AI/ML repositories have critical CI/CD vulnerabilities according to Mitiga's 2026 analysis.
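Checksum verification can be as simple as comparing an artifact's SHA-256 digest against a value recorded when the version was reviewed. A minimal sketch with hypothetical function names follows. For Python dependencies, pip's hash-checking mode (`pip install --require-hashes -r requirements.txt`) applies the same idea at install time.

```python
import hashlib
import hmac
from pathlib import Path


def sha256_of(path: Path, chunk_size: int = 1 << 20) -> str:
    """Compute the SHA-256 hex digest of a file, reading it in chunks."""
    digest = hashlib.sha256()
    with path.open("rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def matches_pinned_hash(path: Path, expected_hex: str) -> bool:
    """Return True if the file's digest equals the pinned value."""
    return hmac.compare_digest(sha256_of(path), expected_hex.strip().lower())
```

A pinned hash stops an automatic upgrade to a release you have not reviewed. It does not help if the hash you pin belongs to a release that was already malicious when published, which is why it complements behavioral monitoring rather than replacing it.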

How do I evaluate a compliance vendor's legitimacy?

Independently verify which audit firm signed the report. Verify the observation period for Type II audits was at least six months. Ask whether the compliance platform generates evidence or merely organizes it. And check whether the vendor has disclosed any certifications that were later withdrawn or re-audited.
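The observation-period check is simple date arithmetic once you have the period start and end dates from the report itself. A small sketch, assuming a 180-day threshold as a stand-in for "six months"; the dates in the example are hypothetical.

```python
from datetime import date

MIN_OBSERVATION_DAYS = 180  # assumption: roughly six months


def observation_days(period_start: date, period_end: date) -> int:
    """Length of the audit observation period in days."""
    return (period_end - period_start).days


def is_short_observation(period_start: date, period_end: date,
                         minimum_days: int = MIN_OBSERVATION_DAYS) -> bool:
    """Return True if the observation period is shorter than the threshold."""
    return observation_days(period_start, period_end) < minimum_days


if __name__ == "__main__":
    # Hypothetical report dates, for illustration only.
    start, end = date(2025, 1, 1), date(2025, 3, 1)
    print(observation_days(start, end), is_short_observation(start, end))
```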

Is this only a risk for Python and PyPI ecosystems?

No. The same attack pattern applies to npm, Maven, Docker Hub, and any registry where publishing credentials flow through CI/CD pipelines. The ReversingLabs 2026 report identified over 512,000 malicious packages across all major open-source registries. Language and ecosystem are secondary. The attack vector is the build pipeline.

Behavioral Detection That Catches What Compliance Misses

TierZero correlates across your entire observability stack to surface the behavioral anomalies that supply chain attacks produce. Unusual credential access patterns, unexpected outbound connections, anomalous deployment activity. The signals that do not trigger a single alert rule but tell a story when connected.

Co-Founder & CEO at TierZero AI

Previously Director of Engineering at Niantic. CTO of Mayhem.gg (acq. Niantic). Owned social infrastructure for 50M+ daily players. Tech Lead for Meta Business Manager.
