Context Engineering Is What Comes After Prompt Engineering

Context engineering separates production AI agents from demos. Learn the four operations, four failure modes, and why 65% of enterprise AI failures trace back to context management.

Prompt engineering is stateless. Context engineering is stateful. The gap between them is where 88% of AI agents die before they ever reach production. Here is what the discipline looks like and why your team needs it.

Andrej Karpathy put it simply last year: "The LLM is a CPU. The context window is RAM. You are the operating system." That framing landed because it names the thing most teams building AI agents have not figured out yet.

Prompt engineering got you to the demo. It will not get you to production.

The discipline that separates agents that improve over time from agents that forget everything after every call has a name now: context engineering. And most engineering teams deploying agents in 2026 are still in the prompt engineering mindset.


The Shift: Stateless to Stateful

Adi Polak framed it on InfoQ this week: the industry is "moving from a stateless, chatbot era to a stateful, agentic approach."

Prompt engineering assumes each call starts from scratch. You write a system prompt, maybe add few-shot examples, and send it. The model has no memory of previous interactions, no accumulated knowledge, no sense of what it tried before. This works for chatbots. It breaks for anything that runs longer than a single request.

Context engineering is different. It asks: across dozens or hundreds of calls, what does this agent need to know right now? What should it remember from last week? What can it safely forget? What has it tried that failed?
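To make the contrast concrete, here is a minimal sketch. It assumes a hypothetical `call_model` function standing in for any LLM API, and every other name is illustrative rather than taken from a framework. The stateless version sends the same two inputs on every call. The stateful version assembles each call's context from what the agent remembers and what it has already tried.

```python
from dataclasses import dataclass, field


def call_model(context: str) -> str:
    """Hypothetical stand-in for an LLM API call; returns a canned reply."""
    return f"(model reply to {len(context)} chars of context)"


def stateless_step(system_prompt: str, user_msg: str) -> str:
    # Prompt engineering: every call starts from scratch with the same two inputs.
    return call_model(f"{system_prompt}\n\n{user_msg}")


@dataclass
class AgentContext:
    system_prompt: str
    facts: list[str] = field(default_factory=list)            # what it should remember
    failed_attempts: list[str] = field(default_factory=list)  # what it tried that failed

    def build(self, user_msg: str) -> str:
        # Context engineering: assemble what this call needs to know right now.
        parts = [self.system_prompt]
        if self.facts:
            parts.append("Known facts:\n" + "\n".join(f"- {f}" for f in self.facts))
        if self.failed_attempts:
            parts.append("Already tried, failed:\n" + "\n".join(f"- {a}" for a in self.failed_attempts))
        parts.append(user_msg)
        return "\n\n".join(parts)

    def step(self, user_msg: str) -> str:
        return call_model(self.build(user_msg))


ctx = AgentContext("You are an on-call assistant.")
ctx.facts.append("payments-api runs 3 replicas")
ctx.failed_attempts.append("restarting the pod did not clear the 502s")
print(ctx.build("Why are we still seeing 502s?"))
```

The point is where the decisions live. In the stateful version, choosing what goes into each call (and what stays out) is explicit code you can test, not something that happens implicitly inside a prompt string.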

Karpathy's endorsement of the term was characteristically direct: "People associate prompts with short task descriptions you'd give an LLM in your day-to-day use. When in every industrial-strength LLM app, context engineering is the delicate art and science of filling the context window with just the right information for the next step."

The job title is already gone. Fast Company reported that prompt engineering as a standalone role "has all but disappeared," with 68% of firms now providing it as standard training across all roles. What replaced it is harder. Context engineering is not a role you can post on a job board. It is a discipline your team either develops or gets stuck without.

Why Context Fails in Production

The demo worked. The pilot passed. The agent fell apart at scale. If this sounds familiar, you are in the majority.

| Metric | Value | Source |
|---|---|---|
| Enterprise AI failures from context drift or memory loss | 65% | Digital Applied 2026 |
| AI agents that fail to reach production | 88% | Digital Applied 2026 |
| Enterprises with pilots vs. production agents | 78% vs. 11% | Digital Applied 2026 |
| Multi-agent framework failure rates | 40-80% | MongoDB 2026 |
| Failures from inter-agent misalignment | 36.9% | MongoDB 2026 |
| Actual TCO vs. API-only cost estimates | 3.4x higher | Digital Applied 2026 |
| Organizations with comprehensive agent observability | 27% | Digital Applied 2026 |

That 88% failure rate hides the real story. Most agents do not fail during the pilot: 54% of failures occur in the 3-to-9-month window after initial pilot success. The agent worked in a controlled environment with clean data and short conversations. Then it hit production, with noisy logs, contradictory runbooks, and context windows that filled up mid-investigation.

The agents did not get dumber. Their context got worse.

The Four Ways Context Breaks

LangChain's analysis of production agent failures identifies four distinct context failure modes. Each one looks different and requires a different fix.

Context Poisoning

A hallucination enters the agent's memory. The next time the agent retrieves that memory, it treats the hallucination as fact. Future reasoning builds on the error. The feedback loop accelerates: each contaminated decision generates more contaminated memories. Without a consolidation layer that validates new information against existing knowledge, poisoning is inevitable over long-running sessions.
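One way to picture a consolidation layer is as a gate that every candidate memory passes through before it is stored. The sketch below is an illustration under stated assumptions, not a production design:

- Memory is a simple key/value store.
- A hypothetical rule governs writes: a model-inferred claim may never overwrite or contradict a verified fact.
- Conflicting inferences are quarantined rather than stored.

Real systems would compare meaning rather than exact keys, and all class names here are hypothetical.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class Memory:
    key: str        # what the memory is about, e.g. "db.primary_region"
    value: str
    verified: bool  # True if it came from ground truth (logs, config, a human)


class ConsolidatingStore:
    """Hypothetical memory store that validates writes against existing knowledge."""

    def __init__(self) -> None:
        self._items: dict[str, Memory] = {}
        self.rejected: list[Memory] = []  # quarantined writes, kept for operators to review

    def write(self, new: Memory) -> bool:
        existing = self._items.get(new.key)
        if existing and existing.verified and not new.verified:
            if existing.value != new.value:
                # An inference contradicts a verified fact: quarantine it, do not store it.
                self.rejected.append(new)
                return False
            # The inference agrees with a verified fact: keep the verified copy.
            return True
        self._items[new.key] = new
        return True

    def read(self, key: str) -> Memory | None:
        return self._items.get(key)


store = ConsolidatingStore()
store.write(Memory("db.primary_region", "us-east-1", verified=True))
store.write(Memory("db.primary_region", "eu-west-1", verified=False))  # a hallucination
print(store.read("db.primary_region").value, len(store.rejected))
```

The hallucinated region never reaches the store, so later retrievals cannot build on it. That breaks the feedback loop described above at its first step.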

Context Distraction

Too much information in the context window. The model attends to irrelevant details and misses the signal. Chroma's 2025 research tested 18 frontier models and found every single one degrades as input length increases. The NoLiMa benchmark from LMU Munich and Adobe Research showed 11 out of 13 LLMs dropped below 50% of their baseline scores at just 32K tokens. GPT-4o fell from 99.3% to 69.7%.

Context Confusion

Irrelevant information influences the response. The model cannot distinguish between what matters and what is noise. This is the "lost in the middle" problem: LLMs attend well to the beginning and end of their context but poorly to the middle, causing 30%+ accuracy drops for information buried in long prompts.
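A common mitigation for the lost-in-the-middle effect is to reorder retrieved passages. The most relevant ones go at the edges of the context, where models attend best, and the weakest land in the middle. The sketch below assumes its input is already sorted from most to least relevant. It is one ordering heuristic, not a cure for confusion, which still requires dropping irrelevant material altogether.

```python
def reorder_for_edges(ranked: list[str]) -> list[str]:
    """Put rank 1 first, rank 2 last, rank 3 second, rank 4 second-to-last, and so on.

    `ranked` must be sorted from most to least relevant.
    """
    front: list[str] = []
    back: list[str] = []
    for i, item in enumerate(ranked):
        # Alternate between the front and the back of the context.
        if i % 2 == 0:
            front.append(item)
        else:
            back.append(item)
    # Reverse the back half so the second-best item lands in the very last slot.
    return front + back[::-1]


docs = ["d1", "d2", "d3", "d4", "d5"]  # d1 is the most relevant
print(reorder_for_edges(docs))
```

With five documents, the least relevant one (`d5`) ends up in the middle position, which is where the model is least likely to attend to it anyway.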

Context Clash

Conflicting information exists in the same context window. A runbook says one thing. A Slack thread from last month says the opposite. The agent has no mechanism to resolve the contradiction, so it picks one arbitrarily or hedges. In incident investigation, where the agent correlates across logs, metrics, code, and documentation, clashing context produces investigations that contradict themselves.
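An agent cannot resolve a clash it never sees. The minimal sketch below makes the conflict visible. It assumes each context item is tagged with:

- the topic it makes a claim about,
- a source, and
- a last-updated date.

Items are grouped by topic, and any topic where sources disagree is flagged and surfaced explicitly, rather than leaving the model to pick silently. The recency tie-break is an illustrative policy only. An authority ranking (runbook over Slack, say) is just as plausible, and all names are hypothetical.

```python
from collections import defaultdict
from dataclasses import dataclass
from datetime import date


@dataclass
class ContextItem:
    topic: str    # what the item makes a claim about
    claim: str
    source: str   # e.g. "runbook", "slack"
    updated: date


def find_clashes(items: list[ContextItem]) -> dict[str, list[ContextItem]]:
    """Return only the topics whose items make different claims."""
    by_topic: dict[str, list[ContextItem]] = defaultdict(list)
    for item in items:
        by_topic[item.topic].append(item)
    return {t: group for t, group in by_topic.items() if len({i.claim for i in group}) > 1}


def resolve_by_recency(group: list[ContextItem]) -> str:
    """Keep the newest claim, but state the conflict so the model can see it."""
    newest = max(group, key=lambda i: i.updated)
    others = ", ".join(f"{i.source} ({i.updated}) says '{i.claim}'" for i in group if i is not newest)
    return f"{newest.claim} [per {newest.source}, {newest.updated}; conflicts: {others}]"


items = [
    ContextItem("failover", "promote the replica manually", "runbook", date(2025, 3, 1)),
    ContextItem("failover", "failover is automatic now", "slack", date(2026, 1, 12)),
]
for topic, group in find_clashes(items).items():
    print(topic, "->", resolve_by_recency(group))
```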

What Production Context Engineering Looks Like

The Manus team published their lessons from building one of the most complex production agent systems. Their numbers are instructive.

Their agents make an average of 50 tool calls per task, and the input-to-output token ratio is 100:1: for every token the agent generates, it reads 100 tokens of context. With Claude Sonnet, cached input tokens cost 10x less than uncached ones ($0.30 vs. $3.00 per million tokens).

At that ratio, context management is not an optimization. It is the architecture.
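The arithmetic behind that claim is easy to run. The sketch below uses the Manus figures quoted above: a 100:1 input-to-output ratio, and $0.30 vs. $3.00 per million cached vs. uncached input tokens. Two values are assumptions added for illustration:

- an output price of $15 per million tokens, and
- a task of 5,000 output tokens.

Substitute your own provider's rates and workload.

```python
def task_cost(output_tokens: int, cache_hit_rate: float,
              input_output_ratio: float = 100.0,
              cached_per_m: float = 0.30, uncached_per_m: float = 3.00,
              output_per_m: float = 15.00) -> float:
    """Dollar cost of one task, given the share of its input served from the prompt cache."""
    input_tokens = output_tokens * input_output_ratio
    cached = input_tokens * cache_hit_rate
    uncached = input_tokens - cached
    return (cached * cached_per_m + uncached * uncached_per_m + output_tokens * output_per_m) / 1_000_000


# Assumed task: 5,000 output tokens, so 500,000 input tokens at 100:1.
for rate in (0.0, 0.5, 0.9):
    print(f"cache hit rate {rate:.0%}: ${task_cost(5_000, rate):.3f}")
```

Under these assumptions, raising the cache hit rate from 0% to 90% cuts the task from about $1.58 to about $0.36. With no caching, input tokens account for $1.50 of the $1.58, which is why the input side dominates the design.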

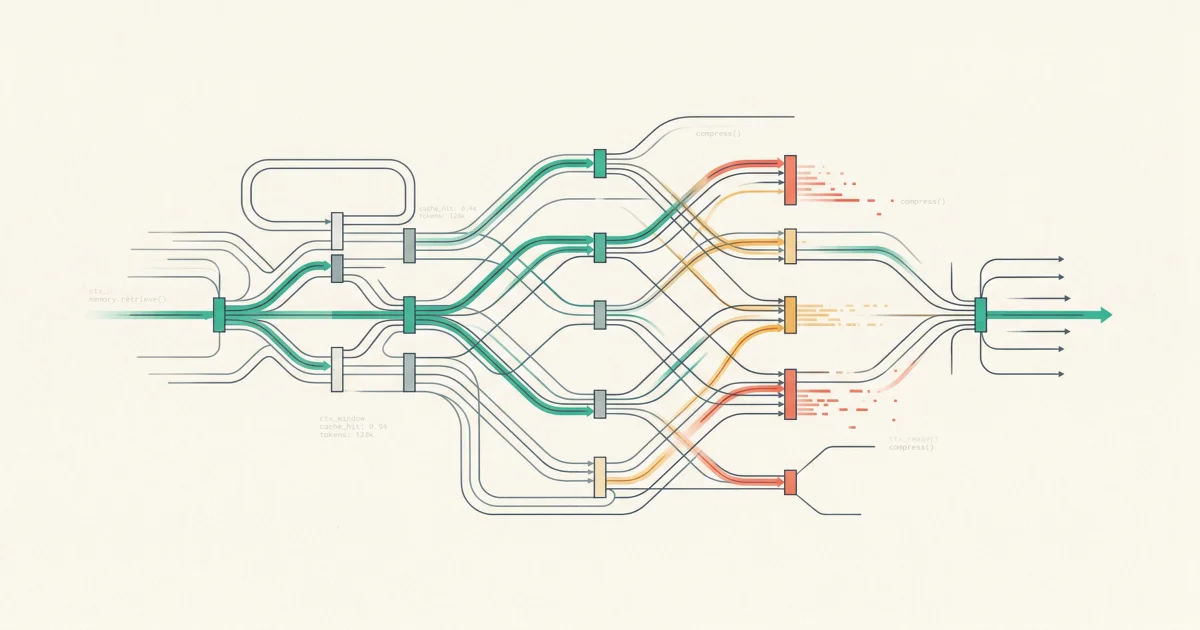

LangChain's framework breaks context engineering into four operations that every production agent system needs:

Write: Making context persistent

Agents need scratchpads for within-session state and long-term memory for cross-session learning. Polak's advice: once you solve a problem, "save it as a skill" so the agent does not re-derive the solution every time. The alternative is an agent that starts from zero on every investigation, making the same mistakes it already learned from last week.
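Here is a minimal sketch of the two write paths. It assumes a JSON file as the long-term store; the file location, classes, and methods are all hypothetical.

- **Scratchpad:** lives only for the session and is discarded at the end.
- **Skill library:** persists solved problems, so the next investigation checks for a known solution before re-deriving anything.

```python
from __future__ import annotations

import json
import tempfile
from pathlib import Path


class SkillLibrary:
    """Hypothetical cross-session store of solved problems ("skills")."""

    def __init__(self, path: Path) -> None:
        self.path = path
        self.skills: dict[str, str] = json.loads(path.read_text()) if path.exists() else {}

    def save(self, problem: str, solution: str) -> None:
        # Persist immediately so the next session starts with this knowledge.
        self.skills[problem] = solution
        self.path.write_text(json.dumps(self.skills, indent=2))

    def lookup(self, problem: str) -> str | None:
        return self.skills.get(problem)


class Session:
    def __init__(self, library: SkillLibrary) -> None:
        self.scratchpad: list[str] = []  # within-session notes, discarded when the session ends
        self.library = library

    def investigate(self, problem: str) -> str:
        known = self.library.lookup(problem)
        if known is not None:
            self.scratchpad.append(f"reused skill for {problem!r}")
            return known
        solution = f"derived fix for {problem}"  # stand-in for the expensive agent loop
        self.scratchpad.append(f"derived new solution for {problem!r}")
        self.library.save(problem, solution)
        return solution


store_path = Path(tempfile.mkdtemp()) / "skills.json"  # hypothetical location
Session(SkillLibrary(store_path)).investigate("disk full on worker nodes")
later = Session(SkillLibrary(store_path))  # a new session reloads skills from disk
later.investigate("disk full on worker nodes")
print(later.scratchpad)
```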

Select: Retrieving the right context

Not everything belongs in the context window at once. Polak warned: "We don't want to load everything to my context. It is going to make more mistakes and cost more." RAG-based tool selection improves accuracy by approximately 3x according to LangChain's data. The retrieval pipeline decides which memories, documents, and tool definitions are relevant right now.
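The select step can be framed as a ranking problem: score every tool description against the current task, then load only the top few into the prompt. Production systems typically score with embeddings. This sketch swaps in plain word overlap so it runs with no dependencies, and the tool names and descriptions are made up.

```python
import re


def tokens(text: str) -> set[str]:
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def select_tools(task: str, tools: dict[str, str], k: int = 2) -> list[str]:
    """Return up to k tool names whose descriptions share the most words with the task."""
    task_words = tokens(task)
    scored = [(len(task_words & tokens(desc)), name) for name, desc in tools.items()]
    # Highest overlap first; ties broken alphabetically so the output is deterministic.
    scored.sort(key=lambda pair: (-pair[0], pair[1]))
    return [name for score, name in scored[:k] if score > 0]


TOOLS = {
    "query_logs": "search application logs for errors in a time range",
    "get_metrics": "fetch cpu memory and latency metrics for a service",
    "read_runbook": "read the runbook for a service",
    "create_ticket": "open a ticket in the issue tracker",
}
print(select_tools("latency spike and errors in checkout service logs", TOOLS))
```

Only the selected tool definitions enter the context window. The cost and confusion of unused tools scale with how many you load, not with how many you own.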

Compress: Fitting more signal into less space

Multi-agent systems can use up to 15x more tokens than single-agent chat, according to Anthropic. Recursive summarization, strategic token pruning, and hierarchical compression keep the context window usable as conversations grow. Claude Code triggers auto-compact summarization at 95% context utilization. Production agents need similar pressure valves.
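A pressure valve of this kind can be sketched as a check inside the agent loop:

1. Measure utilization before each call.
2. Past a threshold, replace older turns with a summary.
3. Keep the most recent turns verbatim.

The 95% default mirrors the Claude Code figure quoted above. The one-token-per-word estimate and the `naive_summary` stub are assumptions standing in for a real tokenizer and a summarization call to the model.

```python
from collections.abc import Callable


def estimate_tokens(text: str) -> int:
    # Rough assumption: about one token per word. Use your model's tokenizer in practice.
    return len(text.split())


def compact(history: list[str], window: int, summarize: Callable[[list[str]], str],
            threshold: float = 0.95, keep_recent: int = 4) -> list[str]:
    """Summarize older turns once utilization reaches `threshold` of the context window."""
    used = sum(estimate_tokens(turn) for turn in history)
    if used / window < threshold or len(history) <= keep_recent:
        return history  # under the limit, or nothing old enough to fold
    split = len(history) - keep_recent
    old, recent = history[:split], history[split:]
    return [summarize(old)] + recent


def naive_summary(turns: list[str]) -> str:
    # Stand-in for an LLM summarization call.
    return f"[summary of {len(turns)} earlier turns]"


history = [f"turn {i}: " + "word " * 20 for i in range(10)]
compacted = compact(history, window=200, summarize=naive_summary)
print(len(history), "->", len(compacted))
```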

Isolate: Preventing context contamination

Multi-agent architectures split context across specialized agents so that one agent's noise does not pollute another's reasoning. Manus uses what they call "context-aware state machines" that mask tool availability without invalidating the KV-cache. Sandboxed environments and structured state schemas prevent cross-contamination between parallel workflows.
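Isolation can be sketched as sub-agents that each keep a private context and hand the lead agent only a condensed result. That way, one worker's raw tool output never enters another agent's window. The classes are hypothetical and the work is simulated. Manus's logit masking is a different, decoding-level technique and is not shown here.

```python
from dataclasses import dataclass, field


@dataclass
class SubAgent:
    name: str
    context: list[str] = field(default_factory=list)  # private to this agent

    def run(self, task: str, raw_observations: list[str]) -> str:
        # Everything the worker sees stays in its own context...
        self.context.append(f"task: {task}")
        self.context.extend(raw_observations)
        # ...and only a short result crosses the boundary (stand-in for a summarizing call).
        return f"{self.name}: {len(raw_observations)} observations reviewed for '{task}'"


@dataclass
class LeadAgent:
    context: list[str] = field(default_factory=list)

    def delegate(self, worker: SubAgent, task: str, observations: list[str]) -> None:
        self.context.append(worker.run(task, observations))


lead = LeadAgent()
logs_agent = SubAgent("logs")
metrics_agent = SubAgent("metrics")
lead.delegate(logs_agent, "find 5xx errors", ["ERROR upstream timeout"] * 500)
lead.delegate(metrics_agent, "check p99 latency", ["p99=2.3s"] * 200)
print(len(logs_agent.context), len(metrics_agent.context), len(lead.context))
```

The lead agent ends up with two lines of context instead of 700 raw observations. The metrics worker never sees the log noise.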

The Operational Gap Nobody Staffed For

Here is the uncomfortable part. Context engineering requires a skill set that most ML and platform engineering teams do not have.

It is not prompt writing. It is information architecture at runtime: designing what an agent knows, how it retrieves knowledge, when it forgets, and how operators verify that its memory is not contaminated.

The numbers suggest this gap is structural, not temporary:

- A global shortage of 4.2 million qualified agentic AI practitioners

- 12 months, on average, to develop internal expertise from zero

- 62% of infrastructure costs come from observability and orchestration, not model APIs

- Only 27% of organizations have comprehensive agent observability stacks

The teams that are closing this gap share a common pattern. They stop treating agent memory as a black box and start treating it as infrastructure that operators can inspect, edit, and audit.

Transparent memory is not a feature. It is the difference between an agent you can debug and an agent you can only restart.

What to Do This Week

- Audit your agent's context lifecycle. Map what goes into the context window, how long it stays, and what triggers removal. If the answer is "we append everything and hope it fits," you have a context distraction problem waiting to happen.

- Implement context compression before you hit the wall. Do not wait for your agent to exceed its context window mid-task. Build summarization and pruning into the agent loop. Track context utilization the same way you track CPU and memory.

- Separate verified facts from inferred conclusions. If your agent's memory treats its own outputs the same as ground-truth data, you are one hallucination away from context poisoning. Label the provenance of every memory entry.

- Make agent memory inspectable. If you cannot see what your agent remembers, you cannot debug why it made a bad decision. Build audit trails into the memory layer. Let operators edit and delete memories. A transparent, editable context engine is not optional for production.

- Stop re-deriving solutions. When your agent solves a problem, persist the solution as a skill or playbook. The cost of re-investigation is not just tokens. It is the compounding context rot from re-exploring the same dead ends.
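The provenance and inspectability items in the list above can be combined in one small data structure. This is a minimal sketch assuming an in-memory store, with all names hypothetical. It does three things:

- Every entry records whether it was observed or inferred, and where it came from.
- Every add, edit, and delete is appended to an audit log along with the actor who made it.
- Operators can filter the agent's memory down to verified facts only.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from enum import Enum


class Provenance(Enum):
    OBSERVED = "observed"  # ground truth: logs, metrics, config, a human operator
    INFERRED = "inferred"  # the agent's own conclusion


@dataclass
class Entry:
    text: str
    provenance: Provenance
    source: str


class AuditedMemory:
    def __init__(self) -> None:
        self.entries: dict[int, Entry] = {}
        self.audit_log: list[tuple[str, str, int, str]] = []  # (timestamp, action, id, actor)
        self._next_id = 0

    def _log(self, action: str, entry_id: int, actor: str) -> None:
        self.audit_log.append((datetime.now(timezone.utc).isoformat(), action, entry_id, actor))

    def add(self, entry: Entry, actor: str = "agent") -> int:
        entry_id = self._next_id
        self._next_id += 1
        self.entries[entry_id] = entry
        self._log("add", entry_id, actor)
        return entry_id

    def edit(self, entry_id: int, new_text: str, actor: str) -> None:
        self.entries[entry_id].text = new_text
        self._log("edit", entry_id, actor)

    def delete(self, entry_id: int, actor: str) -> None:
        del self.entries[entry_id]
        self._log("delete", entry_id, actor)

    def verified_only(self) -> list[str]:
        return [e.text for e in self.entries.values() if e.provenance is Provenance.OBSERVED]


mem = AuditedMemory()
fact = mem.add(Entry("checkout p99 latency is 2.3s", Provenance.OBSERVED, "metrics"))
guess = mem.add(Entry("root cause is the new cache layer", Provenance.INFERRED, "agent"))
mem.delete(guess, actor="oncall@example.com")  # an operator removes a bad inference
print(mem.verified_only())
print([action for _, action, _, _ in mem.audit_log])
```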

The Real Lesson

Prompt engineering was about crafting the perfect instruction. Context engineering is about designing the information architecture that makes every instruction work.

The teams that figure this out will build agents that get smarter with every incident, every investigation, every resolved question. The teams that do not will keep rebuilding the same agent from scratch, wondering why the demo always works better than production.

The context window is not just memory. It is everything your agent knows, everything it has learned, and everything it is about to forget. Engineering that window is the job now.

What is context engineering?

Context engineering is the discipline of designing how AI agents acquire, store, retrieve, compress, and discard information across sessions. Unlike prompt engineering, which optimizes a single model call, context engineering optimizes the entire information lifecycle of an agent system running in production.

How does context rot affect production agents?

Context rot is the measurable degradation in model output quality as context length increases. Every frontier model tested shows this effect. For production agents running multi-step investigations, context rot means the agent's accuracy drops as the investigation progresses. Compression, summarization, and selective retrieval are the primary mitigations.

What is the difference between context engineering and RAG?

RAG (Retrieval-Augmented Generation) is one component of context engineering, specifically the "Select" operation. Context engineering is broader: it includes how information is persisted (Write), how it is compressed (Compress), and how it is isolated across agents (Isolate). RAG answers "what to retrieve." Context engineering answers "what to remember, retrieve, compress, and forget."

Can larger context windows solve context engineering challenges?

No. Larger context windows make overflow less frequent, but they do not solve context rot, poisoning, or distraction. The NoLiMa benchmark showed most models falling below half of their short-context baseline by 32K tokens, well within the advertised limits of modern LLMs. The problem is not window size. It is what you put in the window.

How do I measure context quality in production?

Track four metrics: context utilization (percentage of the window used), cache hit rate (share of input tokens served from the prompt cache), memory freshness (age distribution of facts in the context window), and contradiction rate (how often conflicting information appears in the same context). These are leading indicators of agent degradation.
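Here is a minimal sketch of computing the four metrics from a snapshot of a single model call. The snapshot fields and exact definitions are assumptions for illustration:

- **Cache hit rate:** the share of input tokens the provider reports as served from its prompt cache.
- **Freshness:** summarized as the median age of facts, in hours.
- **Contradiction rate:** the share of topics that carry more than one distinct claim.

```python
import statistics
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class Fact:
    topic: str
    claim: str
    recorded_at: datetime


@dataclass
class CallSnapshot:
    window_tokens: int        # the model's context limit
    input_tokens: int         # tokens actually sent on this call
    cached_input_tokens: int  # tokens the provider reported as served from its prompt cache
    facts: list[Fact]         # memory entries included in this call
    now: datetime


def context_metrics(s: CallSnapshot) -> dict[str, float]:
    # Freshness: median age, in hours, of the facts in the window.
    ages_h = [(s.now - f.recorded_at) / timedelta(hours=1) for f in s.facts]
    # Contradictions: topics that carry more than one distinct claim.
    claims_by_topic: dict[str, set[str]] = {}
    for f in s.facts:
        claims_by_topic.setdefault(f.topic, set()).add(f.claim)
    clashing = sum(1 for claims in claims_by_topic.values() if len(claims) > 1)
    return {
        "utilization": s.input_tokens / s.window_tokens,
        "cache_hit_rate": s.cached_input_tokens / s.input_tokens if s.input_tokens else 0.0,
        "median_fact_age_hours": statistics.median(ages_h) if ages_h else 0.0,
        "contradiction_rate": clashing / len(claims_by_topic) if claims_by_topic else 0.0,
    }


now = datetime(2026, 1, 15, 12, 0)
snap = CallSnapshot(
    window_tokens=200_000, input_tokens=150_000, cached_input_tokens=120_000,
    facts=[
        Fact("failover", "manual", now - timedelta(days=30)),
        Fact("failover", "automatic", now - timedelta(hours=2)),
        Fact("owner", "team-a", now - timedelta(hours=10)),
    ],
    now=now,
)
print(context_metrics(snap))
```

Logged per call and plotted over the life of an investigation, these numbers show the trend that matters: utilization and contradiction rate climbing while cache hit rate falls.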

Context Engineering That Shows Its Work

TierZero's Context Engine uses hybrid search, graph traversal, and investigation replay to give production agents the right context at the right time. Every memory is visible, editable, and auditable. Not a black box.

Co-Founder & CEO at TierZero AI

Previously Director of Engineering at Niantic. CTO of Mayhem.gg (acq. Niantic). Owned social infrastructure for 50M+ daily players. Tech Lead for Meta Business Manager.
